What makes the matrix sign function so interesting and useful is that it can be computed directly without first computing eigenvalues or eigenvectore of  . Roberts noted that the iteration

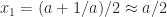

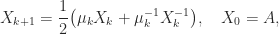

. Roberts noted that the iteration

converges quadratically to  . This iteration is Newton’s method applied to the equation

. This iteration is Newton’s method applied to the equation  , with starting matrix

, with starting matrix  . It is one of the rare circumstances in which explicitly inverting matrices is justified!

. It is one of the rare circumstances in which explicitly inverting matrices is justified!
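The Newton iteration $X_{k+1} = \frac{1}{2}(X_k + X_k^{-1})$, $X_0 = A$, can be sketched in a few lines of NumPy. This is only a minimal illustration for real matrices: the function name `sign_newton`, the stopping test on the relative change between iterates, and the default tolerance are assumptions made for the example rather than anything prescribed above.

```python
import numpy as np


def sign_newton(A, tol=1e-12, max_iter=100):
    """Approximate sign(A) by the unscaled Newton iteration X <- (X + inv(X)) / 2.

    Assumes A is real and square with no eigenvalues on the imaginary axis.
    Returns the final iterate and the number of iterations performed.
    """
    X = np.array(A, dtype=float)
    for k in range(1, max_iter + 1):
        X_new = 0.5 * (X + np.linalg.inv(X))
        # Stop when the relative change between successive iterates is small
        # (an assumed, simple stopping test).
        if np.linalg.norm(X_new - X, 1) <= tol * np.linalg.norm(X_new, 1):
            return X_new, k
        X = X_new
    return X, max_iter


if __name__ == "__main__":
    A = np.array([[2.0, 1.0], [0.0, -3.0]])
    S, iters = sign_newton(A)
    print(S)
    print("S @ S close to I:", np.allclose(S @ S, np.eye(2)))
    print("iterations:", iters)
```

The explicit call to `np.linalg.inv` is deliberate here, since the iteration genuinely needs the inverse matrix.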

Various other iterations are available for computing $\mathrm{sign}(A)$. A matrix multiplication-based iteration is the Newton–Schulz iteration

$$X_{k+1} = \frac{1}{2} X_k \left(3I - X_k^2\right), \quad X_0 = A.$$

This iteration is quadratically convergent if $\|I - A^2\| < 1$ for some subordinate matrix norm. The Newton–Schulz iteration is the $[1/0]$ member of a Padé family of rational iterations

$$X_{k+1} = X_k \, p_{\ell m}\left(I - X_k^2\right) q_{\ell m}\left(I - X_k^2\right)^{-1}, \quad X_0 = A,$$

where $p_{\ell m}(\xi)/q_{\ell m}(\xi)$ is the $[\ell/m]$ Padé approximant to $(1-\xi)^{-1/2}$ ($p_{\ell m}$ and $q_{\ell m}$ are polynomials of degrees at most $\ell$ and $m$, respectively). The iteration is globally convergent to $\mathrm{sign}(A)$ for $\ell = m-1$ and $\ell = m$, and for $\ell \ge m+1$ it converges when $\|I - A^2\| < 1$, with order of convergence $\ell + m + 1$ in all cases.
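As a rough illustration of two members of this family, the sketch below implements the $[1/0]$ (Newton–Schulz) iteration $X_{k+1} = \frac{1}{2}X_k(3I - X_k^2)$ and the $[1/1]$ iteration $X_{k+1} = X_k(3I + X_k^2)(I + 3X_k^2)^{-1}$, often called Halley's iteration. The function names, the shared helper `_iterate`, and the stopping test are hypothetical choices for the example; the Padé approximants are written out by hand rather than generated for general $\ell$ and $m$.

```python
import numpy as np


def _iterate(step, A, tol, max_iter):
    """Apply X <- step(X) from X_0 = A until the relative change is below tol."""
    X = np.array(A, dtype=float)
    for k in range(1, max_iter + 1):
        X_new = step(X)
        if np.linalg.norm(X_new - X, 1) <= tol * np.linalg.norm(X_new, 1):
            return X_new, k
        X = X_new
    return X, max_iter


def sign_newton_schulz(A, tol=1e-12, max_iter=100):
    """[1/0] Pade iteration: X <- X (3I - X^2) / 2.

    Uses only matrix multiplication; convergence needs ||I - A^2|| < 1.
    """
    I = np.eye(len(A))
    return _iterate(lambda X: 0.5 * X @ (3.0 * I - X @ X), A, tol, max_iter)


def sign_halley(A, tol=1e-12, max_iter=100):
    """[1/1] Pade iteration: X <- X (3I + X^2) (I + 3 X^2)^{-1}."""
    I = np.eye(len(A))

    def step(X):
        X2 = X @ X
        # All factors are functions of X, so they commute and the inverse
        # can be applied with a linear solve on the left.
        return np.linalg.solve(I + 3.0 * X2, X @ (3.0 * I + X2))

    return _iterate(step, A, tol, max_iter)


if __name__ == "__main__":
    A = np.array([[0.9, 0.1], [0.0, -0.8]])  # satisfies ||I - A^2||_1 < 1
    print(sign_newton_schulz(A))
    print(sign_halley(np.array([[2.0, 1.0], [0.0, -3.0]])))
```

The Newton–Schulz function does not check the norm condition itself, so calling it on a matrix that violates the condition may simply fail to converge.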

Although the rate of convergence of these iterations is at least quadratic, and hence asymptotically fast, it can be slow initially. Indeed for  , if

, if  then the Newton iteration computes

then the Newton iteration computes  , and so the early iterations make slow progress towards

, and so the early iterations make slow progress towards  . Fortunately, it is possible to speed up convergence with the use of scale parameters. The Newton iteration can be replaced by

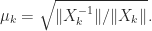

. Fortunately, it is possible to speed up convergence with the use of scale parameters. The Newton iteration can be replaced by

with, for example,

This parameter  can be computed at no extra cost.

can be computed at no extra cost.

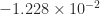
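To see why a scale parameter of the form $\mu_k = \sqrt{\|X_k^{-1}\| / \|X_k\|}$ helps, it may be useful to work through the scalar case, where the norm is just the absolute value. The starting value $x_0 = 1000$ below is chosen purely for illustration:

$$
\begin{aligned}
\text{unscaled:}\quad & x_1 = \tfrac{1}{2}\left(1000 + \tfrac{1}{1000}\right) = 500.0005, \\
\text{scaled:}\quad & \mu_0 = \sqrt{|x_0^{-1}| / |x_0|} = \tfrac{1}{1000}, \qquad
x_1 = \tfrac{1}{2}\left(\mu_0 x_0 + \mu_0^{-1} x_0^{-1}\right) = \tfrac{1}{2}(1 + 1) = 1.
\end{aligned}
$$

For a scalar the scaling moves $x_0$ to $\mathrm{sign}(x_0)$ in a single step. For a matrix the eigenvalues cannot all be normalized at once, so the effect is less dramatic, but the same balancing of $X_k$ against $X_k^{-1}$ is at work.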

As an example, we took `A = gallery('lotkin',4)`, which has eigenvalues $1.887$, $-1.980\times 10^{-1}$, $-1.228\times 10^{-2}$, and $-1.441\times 10^{-4}$ to four significant figures. After six iterations of the unscaled Newton iteration, $X_6$ had an eigenvalue of approximately $-108$, showing that $X_6$ is far from $\mathrm{sign}(A)$, which has eigenvalues $\pm 1$. Yet when scaled by $\mu_k$ (computed in a fixed matrix norm), after six iterations all the eigenvalues of $X_6$ agreed with $\pm 1$ to within a small tolerance, and the iteration had converged to within this tolerance.
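An experiment of this kind can be approximated in NumPy along the following lines. Several things here are assumptions rather than details taken from the text: `lotkin` builds the matrix as a Hilbert matrix with its first row set to ones, which is how `gallery('lotkin',n)` is documented; the scale parameter is evaluated in the 1-norm by default; and the helper names are hypothetical. The exact figures printed will depend on these choices and on the floating-point environment.

```python
import numpy as np


def lotkin(n):
    """Hilbert matrix with its first row replaced by ones."""
    i, j = np.indices((n, n))
    A = 1.0 / (i + j + 1)
    A[0, :] = 1.0
    return A


def newton_iterate(A, steps, scaled=False, norm_ord=1):
    """Return X_steps from the (optionally scaled) Newton iteration, X_0 = A."""
    X = np.array(A, dtype=float)
    for _ in range(steps):
        X_inv = np.linalg.inv(X)
        mu = 1.0
        if scaled:
            # mu_k = sqrt(||X_k^{-1}|| / ||X_k||), reusing the inverse already formed.
            mu = np.sqrt(np.linalg.norm(X_inv, norm_ord) / np.linalg.norm(X, norm_ord))
        X = 0.5 * (mu * X + X_inv / mu)
    return X


def dist_to_pm1(X):
    """Distance of each eigenvalue of X from the nearer of +1 and -1."""
    eigs = np.linalg.eigvals(X)
    return np.minimum(np.abs(eigs - 1.0), np.abs(eigs + 1.0))


if __name__ == "__main__":
    A = lotkin(4)
    print("eigenvalues of A:", np.sort(np.linalg.eigvals(A).real))
    for scaled in (False, True):
        X6 = newton_iterate(A, 6, scaled=scaled)
        label = "scaled" if scaled else "unscaled"
        print(label, "eigenvalues of X_6:", np.linalg.eigvals(X6))
        print(label, "max distance from +-1:", dist_to_pm1(X6).max())
```

Because each $X_k$ is a rational function of $A$, the eigenvalues of the unscaled iterates are just the scalar Newton iterates of the eigenvalues of $A$, which is why the smallest eigenvalue of $A$ dominates the slow initial phase.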

The Matrix Computation Toolbox contains a MATLAB function `signm` that computes the matrix sign function. It computes a Schur decomposition and then obtains the sign of the triangular Schur factor by a finite recurrence. This function is too expensive for use in applications, but it is reliable and useful for experimentation.