An upper bidiagonal matrix

depends on just parameters, which appear on the main diagonal and the superdiagonal.

Such matrices arise commonly, for example as the factor and the transpose of the

factor in the LU factorization of tridiagonal matrices, and as the intermediate matrix in the computation of the singular value decomposition by the Golub–Reinsch algorithm.

Bidiagonal matrices have many interesting properties, especially when we consider products of them. We describe some of their properties here.

Inverse

Consider the inverse of a nonsingular bidiagonal matrix:

Notice that every element in the upper triangle is a product of off-diagonal elements of and inverses of diagonal elements, that the superdiagonals have alternating signs attached, and that there are no additions. These properties hold for general

.

The consequences include:

- the absolute values of the elements of

depend on

, as there is no cancellation in the formulas for

(unlike for general triangular matrices),

- the singular values of

depend only on

(less obvious, and provable by a scaling argument).

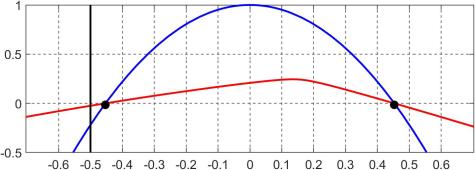

Matrix Function

There is a simple formula for any function of , not just the inverse. Here, the

term is a divided difference.

Theorem 1. If

is upper bidiagonal then

is upper triangular with

and

![\notag f_{ij} = b_{i,i+1} b_{i+1,i+2} \dots b_{j-1,j}\, f[b_{ii},b_{i+1,i+1}, \dots, b_{jj}], \quad j > i.](https://s0.wp.com/latex.php?latex=%5Cnotag+++++++f_%7Bij%7D+%3D+b_%7Bi%2Ci%2B1%7D+b_%7Bi%2B1%2Ci%2B2%7D+%5Cdots+b_%7Bj-1%2Cj%7D%5C%2C++++++++++++++++f%5Bb_%7Bii%7D%2Cb_%7Bi%2B1%2Ci%2B1%7D%2C+%5Cdots%2C+b_%7Bjj%7D%5D%2C+%5Cquad+j+%3E+i.+&bg=ffffff&fg=222222&s=0&c=20201002)

A special case is the formula for a function of an Jordan block:

Condition Number of Product of Bidiagonals

Perhaps the most interesting result is that if we have a factorization of a matrix into a product of

bidiagonal matrices then we can compute the condition number

in

flops and to high accuracy in floating-point arithmetic. Every matrix has such a factorization and in some cases the entries of the factors are known as explicit formulas. For example, we can compute the condition number of the Hilbert matrix

to high accuracy in

flops.

| Relative error for fast algorithm | ||

|---|---|---|

| 4 | 2.84e4 | 1.28e-16 |

| 8 | 3.39e10 | 2.25e-16 |

| 16 | 5.06e22 | 3.67e-17 |

| 32 | 1.36e47 | 1.75e-15 |

| 64 | 1.10e96 | 1.77e-15 |

Any attempt to compute by explicitly forming

is doomed to failure by the rounding errors incurred in the formation, no matter what algorithm is used for the computation.

All this analysis, and much more, is contained in

- Nicholas J. Higham, The Power of Bidiagonal Matrices, ArXiv:2311.06609, November 2023.

which is based on the Hans Schneider Prize talk that I gave at The 25th Conference of the International Linear Algebra Society, Madrid, Spain, June 12-16, 2023.

A specific example, in MATLAB, is

A specific example, in MATLAB, is