An $n\times n$ matrix $A$ is normal if $A^*A = AA^*$, that is, if $A$ commutes with its conjugate transpose. Although the definition is simple to state, its significance is not immediately obvious.
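As an illustration, here is a minimal sketch in Python with NumPy (an illustrative choice; the helper name `is_normal` and its default relative tolerance are assumptions). It checks the definition directly by forming the commutator $A^*A - AA^*$ and comparing its Frobenius norm with $\|A\|_F^2$. In floating-point arithmetic some tolerance is unavoidable, so the test really asks whether $A$ is normal to within rounding errors.

```python
import numpy as np

def is_normal(A, tol=1e-12):
    """Return True if A*A and AA* agree to within a relative tolerance.

    The tolerance is scaled by ||A||_F^2, the natural size of A*A,
    so the test does not depend on the overall scaling of A.
    """
    A = np.asarray(A, dtype=complex)
    AH = A.conj().T                      # conjugate transpose A*
    commutator = AH @ A - A @ AH         # zero exactly when A is normal
    scale = max(np.linalg.norm(A, "fro") ** 2, np.finfo(float).tiny)
    return np.linalg.norm(commutator, "fro") <= tol * scale

# A Hermitian matrix is normal; a Jordan block is not.
H = np.array([[2, 1 - 1j], [1 + 1j, 3]])
J = np.array([[1, 1], [0, 1]])
print(is_normal(H), is_normal(J))   # True False
```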

The definition says that the inner product of the $i$th and $j$th columns equals the inner product of the $i$th and $j$th rows for all $i$ and $j$. For $i = j$ this means that the $i$th row and the $i$th column have the same 2-norm for all $i$. This fact can easily be used to show that a normal triangular matrix must be diagonal. It then follows from the Schur decomposition that $A$ is normal if and only if it is unitarily diagonalizable: $A = QDQ^*$ for some unitary $Q$ and diagonal $D$, where $D$ contains the eigenvalues of $A$ on the diagonal. Thus the normal matrices are those with a complete set of orthonormal eigenvectors.
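The step from equal row and column norms to diagonality can be written out as follows. Let $T$ be upper triangular and normal, and compare the $(1,1)$ elements of $T^*T$ and $TT^*$, which are the squared 2-norms of the first column and the first row of $T$:

$$
(T^*T)_{11} = \sum_{k=1}^n |t_{k1}|^2 = |t_{11}|^2,
\qquad
(TT^*)_{11} = \sum_{k=1}^n |t_{1k}|^2 = |t_{11}|^2 + \sum_{k=2}^n |t_{1k}|^2 .
$$

Equating the two gives $t_{1k} = 0$ for $k = 2\colon n$, so $T = \mathrm{diag}(t_{11}, T_{22})$ with $T_{22}$ upper triangular of order $n-1$. Normality of $T$ implies normality of $T_{22}$, and induction shows that $T$ is diagonal. Applying this to the triangular factor $T$ in a Schur decomposition $A = QTQ^*$, which is normal whenever $A$ is, gives $T = D$.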

For a general diagonalizable matrix, $A = XDX^{-1}$, the condition number $\kappa(X) = \|X\|\,\|X^{-1}\|$ can be arbitrarily large, but for a normal matrix $X$ can be taken to have 2-norm condition number $1$. This property makes normal matrices well-behaved for numerical computation.
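The contrast can be seen numerically. The sketch below (NumPy; the helper name `eigvec_cond` and the test matrices are illustrative assumptions) computes $\kappa_2(X)$ for the eigenvector matrix returned by `np.linalg.eig`, which scales each column to unit 2-norm. That $X$ is only one of many diagonalizing matrices, so its condition number is an upper bound for the smallest achievable one.

```python
import numpy as np

def eigvec_cond(A):
    """2-norm condition number of the eigenvector matrix X returned by eig.

    np.linalg.eig scales each column of X to unit 2-norm. This is just one
    diagonalizing matrix, so the result bounds the minimal condition number
    from above and need not equal it.
    """
    _, X = np.linalg.eig(A)
    return np.linalg.cond(X, 2)

# Nonnormal upper triangular matrices: kappa_2(X) grows roughly like 2*t.
for t in [1.0, 1e2, 1e4]:
    A = np.array([[1.0, t], [0.0, 2.0]])
    print(f"t = {t:g}: kappa_2(X) = {eigvec_cond(A):.3g}")

# A symmetric (hence normal) matrix: X is orthogonal, so kappa_2(X) = 1.
B = np.array([[1.0, 3.0], [3.0, 2.0]])
print(f"symmetric: kappa_2(X) = {eigvec_cond(B):.3g}")
```

The eigenvectors of the triangular matrix are $[1, 0]^T$ and a multiple of $[t, 1]^T$, which become nearly parallel as $t$ grows; this is what drives the condition number up.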

Many equivalent conditions to being normal are known: seventy are given by Grone et al. (1987) and a further nineteen are given by Elsner and Ikramov (1998).

The normal matrices include the classes of matrix given in this table:

| Real | Complex |
|---|---|
| Diagonal | Diagonal |
| Symmetric | Hermitian |
| Skew-symmetric | Skew-Hermitian |
| Orthogonal | Unitary |
| Circulant | Circulant |

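Circulant matrices are Toeplitz matrices in which the diagonals wrap around; for $n = 4$,

$$
C = \begin{bmatrix}
c_1 & c_4 & c_3 & c_2\\
c_2 & c_1 & c_4 & c_3\\
c_3 & c_2 & c_1 & c_4\\
c_4 & c_3 & c_2 & c_1
\end{bmatrix}.
$$

They are diagonalized by a unitary matrix known as the discrete Fourier transform matrix, which has $(r,s)$ element $n^{-1/2}\exp(-2\pi \mathrm{i}(r-1)(s-1)/n)$.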
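A quick numerical check of this diagonalization, as a NumPy sketch (the helper `circulant`, the size $n = 5$ and the random first column are illustrative assumptions). With 0-based indices $r, s$ the unitary DFT matrix has elements $n^{-1/2}\exp(-2\pi\mathrm{i}rs/n)$, which matches the $(r-1)(s-1)$ formula in 1-based indexing. Conjugating a circulant by it should give a diagonal matrix whose diagonal is the FFT of the first column.

```python
import numpy as np

def circulant(c):
    """Circulant matrix with first column c: C[i, j] = c[(i - j) mod n]."""
    c = np.asarray(c)
    n = c.size
    i, j = np.indices((n, n))
    return c[(i - j) % n]

n = 5
rng = np.random.default_rng(0)
c = rng.standard_normal(n)
C = circulant(c)

# Unitary DFT matrix, 0-based indices: F[r, s] = exp(-2*pi*1j*r*s/n) / sqrt(n).
r, s = np.indices((n, n))
F = np.exp(-2j * np.pi * r * s / n) / np.sqrt(n)

D = F @ C @ F.conj().T
print(np.allclose(F.conj().T @ F, np.eye(n)))       # F is unitary
print(np.allclose(D, np.diag(np.fft.fft(c))))       # F C F^* = diag(fft(c))
```

The eigenvalues therefore come from a single FFT of $c$, which is why circulant systems can be solved in $O(n\log n)$ operations.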

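A normal matrix is not necessarily of the form given in the table, even for $n = 2$. Indeed, a $2\times 2$ real normal matrix must have one of the forms

$$
\begin{bmatrix} a & b\\ b & c \end{bmatrix},
\qquad
\begin{bmatrix} a & b\\ -b & a \end{bmatrix}.
$$

The first matrix is symmetric. The second matrix is of the form $aI + K$, where $K$ is skew-symmetric and satisfies $K^2 = -b^2 I$, and it has eigenvalues $a \pm \mathrm{i}b$.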
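The claims about the second form are easy to confirm numerically. In this NumPy sketch the values $a = 3$, $b = 2$ are arbitrary illustrative choices.

```python
import numpy as np

a, b = 3.0, 2.0                            # arbitrary illustrative values
K = np.array([[0.0, b], [-b, 0.0]])        # skew-symmetric: K.T == -K
A = a * np.eye(2) + K                      # the second form [[a, b], [-b, a]]

print(np.allclose(K @ K, -b**2 * np.eye(2)))    # K^2 = -b^2 I
print(np.allclose(A.T @ A, A @ A.T))            # A is normal
ev = np.linalg.eigvals(A)
ev = ev[np.argsort(ev.imag)]                    # order as a - ib, a + ib
print(ev)
```

Normality here follows from $A^TA = (aI - K)(aI + K) = a^2I - K^2 = (aI + K)(aI - K) = AA^T$.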

It is natural to ask what the commutator $C = A^*A - AA^*$ can look like when $A$ is not normal. One immediate observation is that $C$ is Hermitian with zero trace (since $\mathrm{trace}(A^*A) = \mathrm{trace}(AA^*)$), so its real eigenvalues sum to zero. Hence if $C$ is nonzero it is indefinite, with at least one positive and at least one negative eigenvalue. In particular, $C$ has at least two nonzero eigenvalues, so it cannot be of rank $1$.
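These properties can be observed on a random example. The NumPy sketch below uses a random complex $4\times 4$ matrix (an illustrative choice, nonnormal with probability 1) and inspects the eigenvalues of its commutator.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 4
A = rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))
AH = A.conj().T
C = AH @ A - A @ AH                        # commutator A*A - AA*

print(np.allclose(C, C.conj().T))          # C is Hermitian
ev = np.linalg.eigvalsh(C)                 # real eigenvalues in ascending order
print(ev.sum())                            # trace(C) = 0 up to rounding
print(ev[0] < 0 < ev[-1])                  # indefinite: both signs occur
# Count eigenvalues that are not negligible relative to the largest one.
print(np.sum(np.abs(ev) > 1e-10 * np.abs(ev).max()))   # at least 2
```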

In the polar decomposition $A = UH$, where $U$ is unitary and $H$ is Hermitian positive semidefinite, the polar factors commute if and only if $A$ is normal.
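One way to compute the polar factors is from the singular value decomposition: if $A = W\Sigma V^*$ then $U = WV^*$ and $H = V\Sigma V^*$. The NumPy sketch below uses this construction (the helper names `polar` and `factors_commute` are hypothetical, chosen for illustration) to test whether the factors commute for a normal and a nonnormal matrix.

```python
import numpy as np

def polar(A):
    """Polar decomposition A = U H computed from the SVD A = W diag(s) V^*."""
    W, s, Vh = np.linalg.svd(A)
    U = W @ Vh                                  # unitary factor
    H = Vh.conj().T @ np.diag(s) @ Vh           # Hermitian positive semidefinite factor
    return U, H

def factors_commute(A):
    U, H = polar(A)
    return np.allclose(U @ H, H @ U)

N = np.array([[1.0, 2.0], [-2.0, 1.0]])   # normal (of the form aI + K)
M = np.array([[1.0, 2.0], [0.0, 1.0]])    # not normal
print(factors_commute(N), factors_commute(M))   # True False
```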

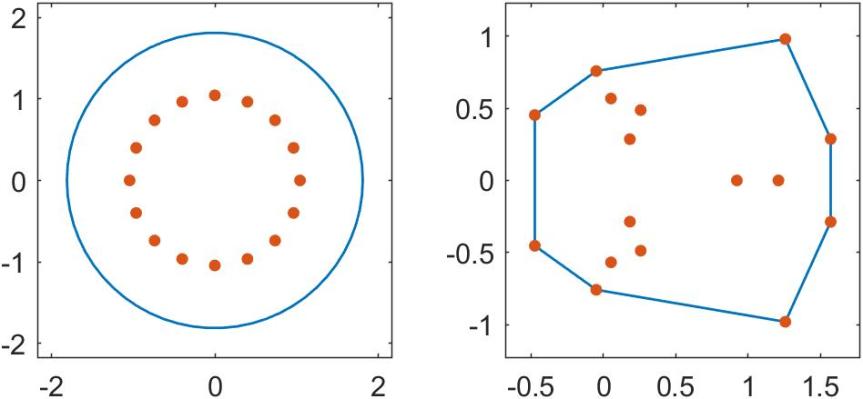

The field of values, also known as the numerical range, is defined for $A\in\mathbb{C}^{n\times n}$ by

$$
F(A) = \{\, z^*Az : z\in\mathbb{C}^n,\ z^*z = 1 \,\}.
$$

The set is compact and convex (a nontrivial property proved by Toeplitz and Hausdorff), and it contains all the eigenvalues of $A$. Normal matrices have the property that the field of values is the convex hull of the eigenvalues. The figure illustrates two fields of values, with the eigenvalues plotted as dots. The one on the left is for the nonnormal matrix `gallery('smoke',16)` and that on the right is for the circulant matrix `gallery('circul',x)` with `x` constructed as `x = randn(16,1); x = x/norm(x)`.
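The text does not say how such plots are produced. One standard approach, assumed here and not necessarily the method behind the figure, uses the fact that for each angle $\theta$ a unit eigenvector $x$ for the largest eigenvalue of the Hermitian part of $e^{\mathrm{i}\theta}A$ maximizes $\mathrm{Re}(e^{\mathrm{i}\theta}z)$ over $z \in F(A)$, so $x^*Ax$ is a boundary point. MATLAB's `gallery` matrices are not reproduced in this NumPy sketch. It uses instead the diagonal (hence normal) matrix $\mathrm{diag}(1, \mathrm{i}, -1, -\mathrm{i})$, whose field of values is the square with these eigenvalues as vertices.

```python
import numpy as np

def fov_boundary(A, m=180):
    """Return m points on the boundary of the field of values F(A).

    For each angle theta, a unit eigenvector x belonging to the largest
    eigenvalue of the Hermitian part of exp(1j*theta)*A gives a point
    x^* A x of F(A) lying on a supporting line of F(A).
    """
    A = np.asarray(A, dtype=complex)
    points = np.empty(m, dtype=complex)
    for k, theta in enumerate(np.linspace(0.0, 2 * np.pi, m, endpoint=False)):
        B = np.exp(1j * theta) * A
        H = (B + B.conj().T) / 2          # Hermitian part of B
        _, V = np.linalg.eigh(H)          # eigenvalues returned in ascending order
        x = V[:, -1]                      # unit eigenvector for the largest eigenvalue
        points[k] = x.conj() @ A @ x      # Rayleigh quotient, a point of F(A)
    return points

# F(A) is the square |Re z| + |Im z| <= 1 with vertices 1, i, -1, -i.
A = np.diag([1, 1j, -1, -1j])
z = fov_boundary(A)
print(np.max(np.abs(z.real) + np.abs(z.imag)))   # 1 up to rounding
```

Plotting `z` (for example with Matplotlib) together with the eigenvalues gives pictures like those in the figure. For a nonnormal matrix the boundary points typically lie well outside the convex hull of the eigenvalues.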

Measures of Nonnormality

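How can we measure the degree of nonnormality of a matrix? Let $A\in\mathbb{C}^{n\times n}$ have the Schur decomposition $A = QTQ^*$, where $Q$ is unitary and $T$ is upper triangular, and write $T = D + M$, where $D = \mathrm{diag}(\lambda_1,\dots,\lambda_n)$ is diagonal with the eigenvalues of $A$ on its diagonal and $M$ is strictly upper triangular. If $A$ is normal then $M$ is zero, so $\|M\|_F$ is a natural measure of how far $A$ is from being normal. While $M$ depends on $Q$ (which is not unique), its Frobenius norm does not, since

$$
\|M\|_F^2 = \|T\|_F^2 - \|D\|_F^2 = \|A\|_F^2 - \sum_{i=1}^n |\lambda_i|^2 .
$$

Accordingly, Henrici defined the departure from normality by

$$
\nu(A) = \|M\|_F = \Bigl( \|A\|_F^2 - \sum_{i=1}^n |\lambda_i|^2 \Bigr)^{1/2}.
$$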
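Because $\nu(A)^2 = \|A\|_F^2 - \sum_i |\lambda_i|^2$ depends only on $\|A\|_F$ and the eigenvalues, it can be evaluated without forming a Schur decomposition. The following minimal NumPy sketch (the function name is our own) does this. The subtraction loses accuracy through cancellation when $\nu(A)$ is much smaller than $\|A\|_F$, so for nearly normal matrices computing $M$ from a Schur decomposition would be more reliable.

```python
import numpy as np

def departure_from_normality(A):
    """Henrici's nu(A) = (||A||_F^2 - sum_i |lambda_i|^2)^(1/2), Frobenius norm."""
    A = np.asarray(A, dtype=complex)
    lam = np.linalg.eigvals(A)
    nu2 = np.linalg.norm(A, "fro") ** 2 - np.sum(np.abs(lam) ** 2)
    return float(np.sqrt(max(nu2, 0.0)))   # clip a tiny negative value caused by rounding

# T is already upper triangular, with D = diag(1, 3) and M = [[0, 2], [0, 0]],
# so nu(T) = ||M||_F = 2.
T = np.array([[1.0, 2.0], [0.0, 3.0]])
print(departure_from_normality(T))

# A normal matrix has nu(A) = 0 up to rounding.
print(departure_from_normality(np.array([[1.0, 2.0], [-2.0, 1.0]])))
```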

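Henrici (1962) derived an upper bound for $\nu(A)$ and Elsner and Paardekooper (1987) derived a lower bound, both based on the commutator:

$$
\frac{\|A^*A - AA^*\|_F}{4\|A\|_2} \le \nu(A) \le \Bigl(\frac{n^3-n}{12}\Bigr)^{1/4} \|A^*A - AA^*\|_F^{1/2}.
$$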
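The commutator bounds $\|A^*A - AA^*\|_F/(4\|A\|_2)$ (lower) and $((n^3-n)/12)^{1/4}\|A^*A - AA^*\|_F^{1/2}$ (upper) are cheap to evaluate. This NumPy sketch (helper names and test matrices are illustrative assumptions) compares them with $\nu(A)$. For the $2\times 2$ nilpotent Jordan block the upper bound is attained.

```python
import numpy as np

def nu(A):
    """Henrici's departure from normality, computed from ||A||_F and the eigenvalues."""
    lam = np.linalg.eigvals(A)
    nu2 = np.linalg.norm(A, "fro") ** 2 - np.sum(np.abs(lam) ** 2)
    return float(np.sqrt(max(nu2, 0.0)))

def commutator_bounds(A):
    """Lower and upper bounds on nu(A) in terms of c = ||A*A - AA*||_F."""
    n = A.shape[0]
    AH = A.conj().T
    c = np.linalg.norm(AH @ A - A @ AH, "fro")
    lower = c / (4 * np.linalg.norm(A, 2))
    upper = ((n**3 - n) / 12) ** 0.25 * np.sqrt(c)
    return float(lower), float(upper)

# 2x2 nilpotent Jordan block: nu = 1, and the upper bound also equals 1.
J = np.array([[0.0, 1.0], [0.0, 0.0]])
print(commutator_bounds(J), nu(J))

rng = np.random.default_rng(0)
A = rng.standard_normal((6, 6))
lo, hi = commutator_bounds(A)
print(lo <= nu(A) <= hi)
```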

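The distance to normality is

$$
d(A) = \min\{\, \|E\|_F : A + E \text{ is normal} \,\}.
$$

This quantity can be computed by an algorithm of Ruhe (1987). It is trivially bounded above by $\nu(A)$ (since $A - QMQ^* = QDQ^*$ is normal) and is also bounded below by a multiple of it (László, 1994):

$$
\frac{\nu(A)}{n^{1/2}} \le d(A) \le \nu(A).
$$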

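Normal matrices are a particular class of diagonalizable matrices. For diagonalizable matrices various bounds are available that depend on the condition number of a diagonalizing transformation. Since such a transformation is not unique, we take a diagonalization $A = XDX^{-1}$, $D = \mathrm{diag}(\lambda_i)$, with $X$ having minimal 2-norm condition number:

$$
\kappa_2(X) = \min\{\, \kappa_2(Y) : Y^{-1}AY \text{ is diagonal} \,\},
\qquad \kappa_2(Y) = \|Y\|_2\,\|Y^{-1}\|_2 .
$$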

Here are some examples of such bounds. We denote by $\rho(A)$ the spectral radius of $A$, the largest absolute value of any eigenvalue of $A$.

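- By taking norms in the eigenvalue-eigenvector equation $Ax = \lambda x$ we obtain $\rho(A) \le \|A\|_2$. Taking norms in $A = XDX^{-1}$ gives $\|A\|_2 \le \kappa_2(X)\|D\|_2 = \kappa_2(X)\rho(A)$. Hence
  $$\frac{\|A\|_2}{\kappa_2(X)} \le \rho(A) \le \|A\|_2.$$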

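- If $A$ has singular values $\sigma_1 \ge \sigma_2 \ge \cdots \ge \sigma_n$ and its eigenvalues are ordered $|\lambda_1| \ge |\lambda_2| \ge \cdots \ge |\lambda_n|$, then (Ruhe, 1975)
  $$\frac{\sigma_i}{\kappa_2(X)} \le |\lambda_i| \le \kappa_2(X)\,\sigma_i, \quad i = 1\colon n.$$
  Note that for $i = 1$ the previous upper bound is sharper.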

- For any integer $k \ge 0$,
  $$\|A^k\|_2 \le \kappa_2(X)\,\rho(A)^k.$$

- For any function $f$ defined on the spectrum of $A$,
  $$\|f(A)\|_2 \le \kappa_2(X) \max_i |f(\lambda_i)|.$$
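These bounds can be spot-checked numerically. The NumPy sketch below makes several illustrative choices: the random test matrix, the polynomial $f(z) = z^2 - 3z + 1$, the range $k = 0\colon 10$ and the rounding allowance. It also uses the eigenvector matrix returned by `np.linalg.eig` in place of the optimal $X$. This is legitimate because every bound in the list remains valid, though possibly weaker, when the minimal $\kappa_2(X)$ is replaced by $\kappa_2$ of any other diagonalizing matrix.

```python
import numpy as np

rng = np.random.default_rng(2)
n = 5
A = rng.standard_normal((n, n))       # generically diagonalizable and nonnormal
lam, X = np.linalg.eig(A)             # A = X diag(lam) X^{-1}
kappa = np.linalg.cond(X, 2)          # >= the minimal kappa_2 over all diagonalizing X
rho = np.abs(lam).max()               # spectral radius
slack = 1 + 1e-10                     # allowance for rounding errors

normA = np.linalg.norm(A, 2)
sigma = np.linalg.svd(A, compute_uv=False)       # sigma_1 >= ... >= sigma_n
abs_lam = np.sort(np.abs(lam))[::-1]             # |lambda_1| >= ... >= |lambda_n|

# f(z) = z^2 - 3z + 1, evaluated at A directly and at the eigenvalues.
fA = A @ A - 3 * A + np.eye(n)
f_lam = lam**2 - 3 * lam + 1

checks = {
    "||A||_2/kappa <= rho(A) <= ||A||_2":
        normA / kappa <= rho * slack and rho <= normA * slack,
    "sigma_i/kappa <= |lambda_i| <= kappa*sigma_i":
        bool(np.all(sigma / kappa <= abs_lam * slack)
             and np.all(abs_lam <= kappa * sigma * slack)),
    "||A^k||_2 <= kappa*rho(A)^k, k = 0:10":
        all(np.linalg.norm(np.linalg.matrix_power(A, k), 2) <= kappa * rho**k * slack
            for k in range(11)),
    "||f(A)||_2 <= kappa*max|f(lambda_i)|":
        np.linalg.norm(fA, 2) <= kappa * np.abs(f_lam).max() * slack,
}
for name, ok in checks.items():
    print(f"{name}: {ok}")
```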

For normal $A$ we can take $X$ unitary, so that $\kappa_2(X) = 1$, and all these bounds are then equalities. The condition number $\kappa_2(X)$ can therefore be regarded as another measure of nonnormality, as quantified by these bounds.
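For contrast with the nonnormal case, this NumPy sketch builds a normal matrix $A = Q\,\mathrm{diag}(\lambda_i)\,Q^*$ from a random unitary $Q$ (taken from a QR factorization; the construction and the power $k = 5$ are illustrative choices). It then confirms that the bounds collapse to equalities.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 4
# A normal matrix A = Q diag(lam) Q^*, with Q unitary from a QR factorization.
Z = rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))
Q, _ = np.linalg.qr(Z)
lam = rng.standard_normal(n) + 1j * rng.standard_normal(n)
A = Q @ np.diag(lam) @ Q.conj().T

rho = np.abs(lam).max()
sigma = np.linalg.svd(A, compute_uv=False)
k = 5
checks = {
    "kappa_2(Q) = 1": np.isclose(np.linalg.cond(Q, 2), 1.0),
    "||A||_2 = rho(A)": np.isclose(np.linalg.norm(A, 2), rho),
    "sigma_i = |lambda_i|": np.allclose(sigma, np.sort(np.abs(lam))[::-1]),
    "||A^k||_2 = rho(A)^k": np.isclose(np.linalg.norm(np.linalg.matrix_power(A, k), 2), rho**k),
}
for name, ok in checks.items():
    print(f"{name}: {ok}")
```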

References

This is a minimal set of references, which contain further useful references within.

- L. Elsner and Kh. D. Ikramov, Normal Matrices: An Update, Linear Algebra Appl. 285, 291–303, 1998.

- L. Elsner and M. H. C. Paardekooper, On Measures of Nonnormality of Matrices, Linear Algebra Appl. 92, 107–124, 1987.

- Robert Grone, Charles Johnson, Eduardo Sa, and Henry Wolkowicz, Normal Matrices, Linear Algebra Appl. 87, 213–225, 1987.

- Peter Henrici, Bounds for Iterates, Inverses, Spectral Variation and Fields of Values of Non-Normal Matrices, Numer. Math. 4, 24–40, 1962.

- Lajos László, An Attainable Lower Bound for the Best Normal Approximation, SIAM J. Matrix Anal. Appl. 15 (3), 1035–1043, 1994.

- Axel Ruhe, On the Closeness of Eigenvalues and Singular Values of Almost Normal Matrices, Linear Algebra Appl. 11, 87–94, 1975.

- Axel Ruhe, Closest Normal Matrix Finally Found!, BIT 27, 585–598, 1987.