Back in 1980 there were not many up-to-date books on numerical linear algebra. Stewart’s Introduction to Matrix Computations (1973) was a popular textbook, and was the text for the final year undergraduate course that I took on the subject. Parlett’s The Symmetric Eigenvalue Problem (1980) was a graduate level treatment of the symmetric eigenvalue problem. And Wilkinson’s The Algebraic Eigenvalue Problem (1965) was still the bible of numerical linear algebra, albeit already somewhat out of date due to the fast-moving research developments since it was published.

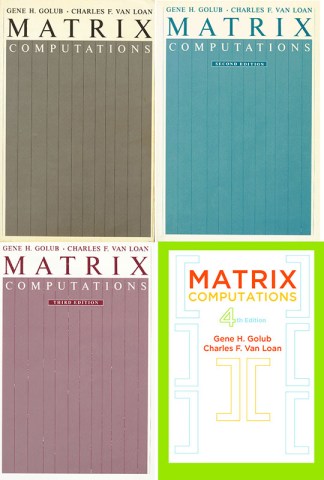

While an MSc student, I heard about the impending publication of a new book on matrix computations by Golub and Van Loan. I pre-ordered a copy and in spring 1983 received one of the first copies in the UK. The book was a revelation. It presented a completely fresh and up-to-date perspective on the subject. Some of the most exciting features were

- extensive use of pseudocode, with MATLAB-style indexing notation, to describe algorithms,

- the use of flops to measure computational cost,

- emphasis on the use of the SVD,

- modern presentation of rounding error analysis, with rounding error bounds given for each algorithm,

- systematic treatment of the conjugate gradient and Lanczos methods,

- coverage of topics not found in earlier books, such as condition estimation, generalized SVD, and total least squares,

- very lively writing style.

I studied the book in great detail and learned a huge amount from it.

A second edition was published in 1989. It was written while Charlie Van Loan was in the UK on sabbatical and I was spending a year at Cornell (Charlie’s home university). I had the opportunity to read and comment on draft chapters. The second edition maintained all the material from the first and added new chapters on matrix multiplication (and the relevant machine architecture considerations) and parallel algorithms, and it was typeset in LaTeX for the first time. The term flop was redefined so that a+b*c represents two flops (as it does today) instead of one as in the first edition. A number of other changes were introduced to address a criticism in some reviews of the first edition that the book was rather terse and fast-paced for use as a course textbook.
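To make the change in flop convention concrete, here is a minimal Python sketch (not taken from the book). The function name `matmul_flops` and the `convention` labels are hypothetical, chosen only for illustration. It assumes the standard triple-loop product of an m-by-n matrix with an n-by-p matrix, in which each innermost step performs one update of the form `c + a*b`.

```python
def matmul_flops(m, n, p, convention="new"):
    """Flop count for C = A*B, with A m-by-n and B n-by-p (triple-loop algorithm).

    The innermost loop runs m*n*p times, and each pass does one update
    of the form c + a*b.
    """
    updates = m * n * p
    if convention == "old":
        # First-edition convention: one flop per a + b*c update.
        return updates
    if convention == "new":
        # Later convention: the multiply and the add count as separate flops.
        return 2 * updates
    raise ValueError("convention must be 'old' or 'new'")


# Square case, n = 100: n^3 flops under the old count, 2n^3 under the new one.
old_count = matmul_flops(100, 100, 100, convention="old")
new_count = matmul_flops(100, 100, 100, convention="new")
```

Under these assumptions the two conventions differ by exactly a factor of two for any algorithm built entirely from such updates, which is worth remembering when comparing operation counts quoted from the first edition with those in later editions.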

A third edition followed in 1996. After a 17-year gap, the fourth edition has just been published. Work on this edition began following the untimely death of Gene Golub in 2007. Some statistics indicate the development of the book:

| Edition | Year | Number of pages | Pages of master bibliography |
|---|---|---|---|
| First | 1983 | 472 | 25 |
| Second | 1989 | 642 | 34 |
| Third | 1996 | 694 | 50 |
| Fourth | 2013 | 756 | |

The master bibliography of the fourth edition is not printed in the book but is downloadable from the book’s web page.

What is Different About the Fourth Edition?

The new edition is physically larger than its predecessors, with a text width of 13 cm versus 11.5 cm in the last edition, so the content is increased by more than the page count would suggest. Moreover, the paper is of extremely high quality, and this makes the book bigger and heavier than you would expect. I bought the hardback, because I know from experience that the softbacks of all three previous editions did not stand up well to heavy use. The image shows the third and fourth editions along with Horn and Johnson’s Matrix Analysis (second edition, 2013) and my Accuracy and Stability of Numerical Algorithms (second edition, 2002).

A number of new topics are included, of which I would pick out

- fast transforms

- Hamiltonian and product eigenvalue problems

- large-scale SVD

- multigrid

- tensor computations

I like the statement in the preface that “References that are historically important have been retained because old ideas have a way of resurrecting themselves.” This is of course particularly true as regards methods suitable for high-performance computing.

Lists of relevant LAPACK codes at the start of each chapter have been removed, as have many of the small, illustrative numerical examples, which are replaced by MATLAB codes to be made available on the book’s web page.

The fourth edition remains the best general reference on matrix computations and a must-have for any serious researcher in the field. A big difference from 1983, when the first edition appeared, is that now a separate research monograph is available covering almost every topic in the book (and due reference is made to 28 such “Global References”). But Matrix Computations brings together and unifies a wide variety of topics in one place.

2013 has been a good year for books on matrices and approximation, with the publication of a second edition of Horn and Johnson’s Matrix Analysis, Trefethen’s Approximation Theory and Approximation Practice, and now this very welcome fourth edition of Golub and Van Loan. It is available from the usual sources as well as from SIAM. Consider the Kindle edition to save your back. You can still have it signed!

