Rounding is the transformation of a number expressed in a particular base to a number with fewer digits. For example, in base 10 we might round the number $x = 7.12345$ to $7.12$, which can be described as rounding to three significant digits or two decimal places. Rounding does not change a number if it already has the requisite number of digits.

The three main uses of rounding are

- to simplify a number in base 10 for human consumption,

- to represent a constant such as $1/3$, $\pi$, or $\sqrt{7}$ in floating-point arithmetic,

- to convert the result of an elementary operation (an addition, multiplication, or division) on floating-point numbers back into a floating-point number.

The floating-point numbers may be those used on a computer (base 2) or a pocket calculator (base 10).
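The second and third uses can be observed directly in binary floating-point arithmetic. The following is a minimal sketch, assuming Python floats are IEEE double precision numbers (true on common platforms); the variable names are illustrative only.

```python
from fractions import Fraction

# The literal 0.1 is rounded to the nearest double when it is parsed,
# so the stored value is a nearby binary fraction rather than 1/10.
stored_tenth = Fraction(0.1)              # exact value of the stored double
constant_error = stored_tenth - Fraction(1, 10)

# The exact sum of the doubles 0.1 and 0.2 is not itself a double,
# so the result of the addition has to be rounded as well.
exact_sum = Fraction(0.1) + Fraction(0.2)
computed_sum = Fraction(0.1 + 0.2)
operation_error = computed_sum - exact_sum

print(float(constant_error), float(operation_error))
```

Both errors are nonzero: the first comes from rounding a constant, the second from rounding the result of an elementary operation.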

Rounding can be done in several ways.

Round to Nearest

The most common form of rounding is to round to the nearest number with the specified number of significant digits or decimal places. In the example above, the two nearest numbers to $x = 7.12345$ with three significant digits are $7.12$ and $7.13$, at distances $0.00345$ and $0.00655$, respectively, from $x$. The nearest of these two numbers, $7.12$, is chosen.
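Round to nearest in base 10 can be tried with Python's `decimal` module. This is a small sketch that uses $x = 7.12345$ as an illustrative value and rounds it to two decimal places; the variable names are assumptions made for the example.

```python
from decimal import Decimal, ROUND_HALF_EVEN

x = Decimal("7.12345")

# Round to two decimal places (three significant digits for this x).
rounded = x.quantize(Decimal("0.01"), rounding=ROUND_HALF_EVEN)

# The two candidates and their distances from x.
lower, upper = Decimal("7.12"), Decimal("7.13")
dist_lower = x - lower
dist_upper = upper - x

print(rounded, dist_lower, dist_upper)
```

Since `dist_lower` is smaller than `dist_upper`, the lower candidate is the one returned; the tie-breaking rule plays no role here.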

What happens if the two candidate numbers are equally close? We need a rule for breaking the tie. The most common choices are

- round to even: choose the number with an even last digit,

- round to odd: choose the number with an odd last digit.

For example, if we round $2.45$ to two significant digits, the result is $2.4$ with round to even and $2.5$ with round to odd.

There are several reasons for preferring to break ties with round to even.

- In bases 2 and 10 a subsequent rounding to one less place does not involve a tie. Thus we have the rounding sequence $2.445$, $2.44$, $2.4$, $2$ with round to even, but $2.445$, $2.45$, $2.5$, $3$ with round to odd.

- For base 2, round to even results in integers more often, as a consequence of producing a zero least significant bit.

- In base 10, after round to even a rounded number can be halved without error.
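The effect of the tie-breaking rule on repeated rounding can be checked with a short experiment. The sketch below starts from the illustrative value $2.445$ and rounds it successively to two, one, and zero decimal places. The `decimal` module has no round-to-odd mode, so `round_half_odd` is a hypothetical helper written for this example.

```python
from decimal import Decimal, ROUND_FLOOR, ROUND_HALF_EVEN

def round_half_odd(x: Decimal, quantum: Decimal) -> Decimal:
    """Round x to a multiple of quantum, breaking ties towards an odd last digit.

    Hypothetical helper: not part of the decimal module.
    """
    lower = x.quantize(quantum, rounding=ROUND_FLOOR)
    upper = lower + quantum
    if x - lower < upper - x:
        return lower
    if x - lower > upper - x:
        return upper
    # Tie: keep the candidate whose last retained digit is odd.
    return lower if int(lower / quantum) % 2 == 1 else upper

def round_half_even(x: Decimal, quantum: Decimal) -> Decimal:
    return x.quantize(quantum, rounding=ROUND_HALF_EVEN)

def repeated(x: Decimal, rounder) -> list:
    """Round x to two, then one, then zero decimal places."""
    seq = [x]
    for quantum in (Decimal("0.01"), Decimal("0.1"), Decimal("1")):
        seq.append(rounder(seq[-1], quantum))
    return seq

even_seq = repeated(Decimal("2.445"), round_half_even)
odd_seq = repeated(Decimal("2.445"), round_half_odd)
print(even_seq)
print(odd_seq)
```

With round to even only the first rounding is a tie, whereas with round to odd every step is a tie and the value drifts upwards.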

IEEE Standard 754 for floating-point arithmetic supports three tie-breaking methods: round to even (the default), round to the number with larger magnitude, and round towards zero (introduced in the 2019 revision for use with the standard’s new augmented operations).

The tie-breaking rule taught in UK schools, for decimal arithmetic, is to round up on ties. The rounding rule then becomes: round down if the first digit to be dropped is $4$ or less and otherwise round up.
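These tie-breaking rules have close analogues in Python's `decimal` module, which makes them easy to compare. In the sketch below, `ROUND_HALF_UP` breaks ties away from zero (the larger magnitude rule, which agrees with the school rule for positive numbers) and `ROUND_HALF_DOWN` breaks ties towards zero. The test values are arbitrary choices for illustration.

```python
from decimal import Decimal, ROUND_HALF_DOWN, ROUND_HALF_EVEN, ROUND_HALF_UP

unit = Decimal("1")
ties = [Decimal("0.5"), Decimal("1.5"), Decimal("2.5"), Decimal("-2.5")]

# One row per tie: (value, ties to even, ties away from zero, ties towards zero).
results = [
    (
        t,
        t.quantize(unit, rounding=ROUND_HALF_EVEN),
        t.quantize(unit, rounding=ROUND_HALF_UP),
        t.quantize(unit, rounding=ROUND_HALF_DOWN),
    )
    for t in ties
]

for row in results:
    print(*row)
```

Note that Python's built-in `round` breaks ties with round to even, so `round(2.5)` gives `2`, not `3`.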

Round Towards Plus or Minus Infinity

Another possibility is to round to the next larger number with the specified number of digits, which is known as round towards plus infinity (or round up). Then, to three significant digits, $x = 7.12345$ rounds to $7.13$ and $-x$ rounds to $-7.12$. Similarly, with round towards minus infinity (or round down) we round to the next smaller number, so that $x$ rounds to $7.12$ and $-x$ rounds to $-7.13$.

This form of rounding is used in interval arithmetic, where an interval guaranteed to contain the exact result is computed in floating-point arithmetic.
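Directed rounding and its use for enclosing a result can be sketched with the `decimal` module, where `ROUND_CEILING` rounds towards plus infinity and `ROUND_FLOOR` rounds towards minus infinity. The value $7.12345$ and the three-digit working precision are assumptions chosen for illustration, and the last part is only a toy version of what an interval arithmetic library does.

```python
from decimal import Context, Decimal, ROUND_CEILING, ROUND_FLOOR

x = Decimal("7.12345")
q = Decimal("0.01")

up_pos = x.quantize(q, rounding=ROUND_CEILING)       # next larger number
up_neg = (-x).quantize(q, rounding=ROUND_CEILING)
down_pos = x.quantize(q, rounding=ROUND_FLOOR)       # next smaller number
down_neg = (-x).quantize(q, rounding=ROUND_FLOOR)
print(up_pos, up_neg, down_pos, down_neg)

# Toy interval arithmetic: enclose 1/3 using three significant digits.
round_down = Context(prec=3, rounding=ROUND_FLOOR)
round_up = Context(prec=3, rounding=ROUND_CEILING)
lo = round_down.divide(Decimal(1), Decimal(3))
hi = round_up.divide(Decimal(1), Decimal(3))
print(lo, hi)
```

Computing the lower endpoint with round towards minus infinity and the upper endpoint with round towards plus infinity guarantees that the exact quotient lies in `[lo, hi]`.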

Round Towards Zero

In this form of rounding we round towards zero, that is, we round $x$ down if $x > 0$ and round it up if $x < 0$. This is also known as chopping, or truncation.
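As a brief illustration, round towards zero corresponds to `ROUND_DOWN` in Python's `decimal` module and to `math.trunc` for conversion to an integer. The values used here are arbitrary examples.

```python
import math
from decimal import Decimal, ROUND_DOWN

x = Decimal("7.12345")
q = Decimal("0.01")

chop_pos = x.quantize(q, rounding=ROUND_DOWN)       # rounds down for x > 0
chop_neg = (-x).quantize(q, rounding=ROUND_DOWN)    # rounds up for x < 0
print(chop_pos, chop_neg)

# Truncation differs from rounding towards minus infinity for negative numbers.
print(math.trunc(-7.9), math.floor(-7.9))
```

The magnitude of the result never exceeds the magnitude of the original number, which is what distinguishes chopping from round towards minus infinity.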

Stochastic Rounding

Stochastic rounding was proposed in the 1950s and is attracting renewed interest, especially in machine learning. It rounds up or down randomly and comes in two forms. The first form rounds up or down with equal probability $1/2$. To describe the second form, let $x$ be the given number and let $x_1$ and $x_2$, with $x_1 < x_2$, be the candidates for the result of rounding. We round up to $x_2$ with probability $(x - x_1)/(x_2 - x_1)$ and down to $x_1$ with probability $(x_2 - x)/(x_2 - x_1)$; note that these probabilities sum to $1$. In floating-point arithmetic, stochastic rounding overcomes the problem that can arise in summing a set of numbers whereby some individual summands are so small that they do not contribute to the computed sum even though they contribute to the exact sum.
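The summation effect can be imitated on a coarse grid. The sketch below is a toy model, not a floating-point implementation: it assumes the second form of stochastic rounding, in which the probability of rounding up is proportional to the distance from the lower candidate, and it rounds to integers after every addition. The function name `stochastic_round` and the fixed seed are choices made for this example.

```python
import math
import random

def stochastic_round(x: float, rng: random.Random) -> float:
    """Round x to an integer, rounding up with probability (x - x1)/(x2 - x1)."""
    x1 = math.floor(x)            # lower candidate
    if x == x1:
        return float(x1)          # already on the grid: nothing to do
    x2 = x1 + 1                   # upper candidate
    p_up = (x - x1) / (x2 - x1)
    return float(x2 if rng.random() < p_up else x1)

rng = random.Random(0)            # fixed seed so the run is repeatable
nearest_sum = 0.0
stochastic_sum = 0.0
for _ in range(1000):
    # Add 0.25, then round the partial sum back onto the integer grid.
    nearest_sum = float(round(nearest_sum + 0.25))
    stochastic_sum = stochastic_round(stochastic_sum + 0.25, rng)

print(nearest_sum, stochastic_sum)   # the exact sum is 250
```

With round to nearest every summand is lost and the sum stays at zero, whereas the stochastically rounded sum has expected value equal to the exact sum.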

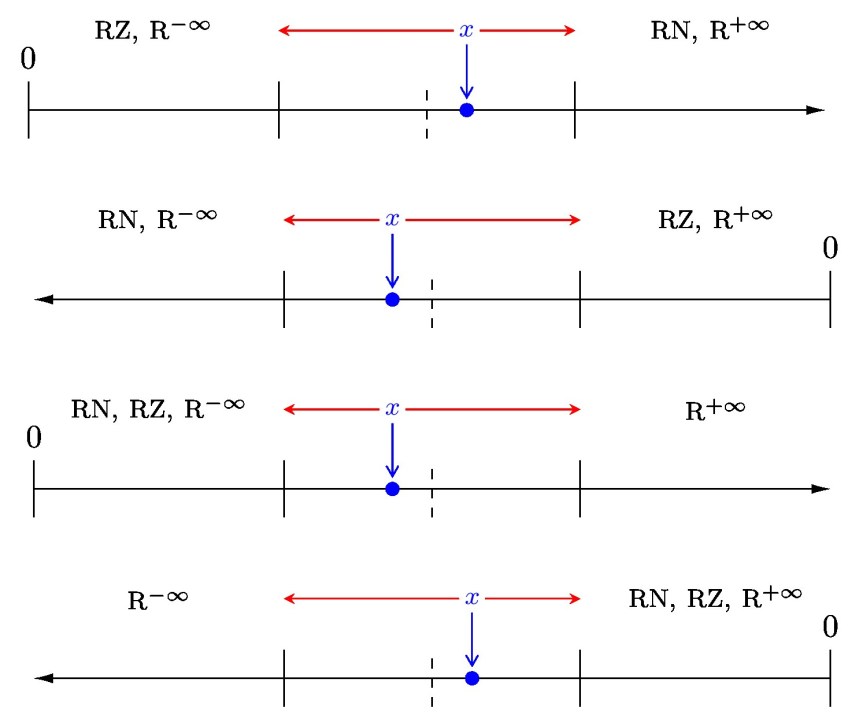

The diagrams above illustrate round to nearest (RN), round towards zero (RZ), round towards plus infinity, and round towards minus infinity. They show the number $x$ to be rounded in four different configurations with respect to the origin and the midpoint (drawn with a dotted line) of the interval between the two candidate rounding results (drawn with a solid line). The red arrows point to the two possible results of rounding.

Real World Rounding

The European Commission’s rules for converting currencies of Member States into Euros (from the time of the creation of the Euro) specify that “half-way results are rounded up” (rounded to plus infinity).

The International Association of Athletics Federations (IAAF) specifies in Rule 165 of its Competition Rules 2018–2019 that all times of track races up to 10,000m should be recorded to a precision of 0.01 second, with rounding to plus infinity. In 2006, the athlete Justin Gatlin was wrongly credited with breaking the 100m world record when his official time of 9.766 seconds was rounded down to 9.76 seconds. Under the IAAF rules it should have been rounded up to 9.77 seconds, matching the world record set by Asafa Powell the year before. The error was discovered several days after the race.
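The two roundings of the 9.766 second time can be reproduced in a couple of lines. This sketch uses Python's `decimal` module and is only an illustration of the arithmetic, not of any official timing software.

```python
from decimal import Decimal, ROUND_CEILING, ROUND_FLOOR

measured = Decimal("9.766")
hundredth = Decimal("0.01")

# Rounding to plus infinity, as the rule requires.
official = measured.quantize(hundredth, rounding=ROUND_CEILING)
# Rounding down, which produced the mistaken record.
mistaken = measured.quantize(hundredth, rounding=ROUND_FLOOR)

print(official, mistaken)
```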

In meteorology, rounding to nearest with ties broken by rounding to odd is favoured. Hunt suggests that the reason is to avoid falsely indicating that it is freezing. Thus $0.5^\circ$C and $32.5^\circ$F round to $1^\circ$C and $33^\circ$F instead of $0^\circ$C and $32^\circ$F.

Useful Tool

The LaTeX package siunitx has the ability to round numbers (in base 10) to a specified number of decimal places or significant figures.

References

This is a minimal set of references, which contain further useful references within.

- Michael P. Connolly, Nicholas J. Higham, and Theo Mary, Stochastic Rounding and Its Probabilistic Backward Error Analysis, MIMS EPrint 2020.12, Manchester Institute for Mathematical Sciences, The University of Manchester, UK, April 2020.

- Nicholas J. Higham, Accuracy and Stability of Numerical Algorithms, second edition, Society for Industrial and Applied Mathematics, Philadelphia, PA, USA, 2002.

- Julian Hunt, Rounding and Other Approximations for Measurements, Records and Targets, Mathematics Today 33, 73–77, 1997.

- IEEE Standard for Floating-Point Arithmetic, IEEE Std 754-2019 (Revision of IEEE 754-2008), IEEE Computer Society, New York, 2019.

Related Blog Posts

- What Is Floating-Point Arithmetic? (2020)—forthcoming

- What Is IEEE Standard Arithmetic? (2020)—forthcoming

This article is part of the “What Is” series, available from https://nhigham.com/category/what-is and in PDF form from the GitHub repository https://github.com/higham/what-is.