A condition number of a problem measures the sensitivity of the solution to small perturbations in the input data. The condition number depends on the problem and the input data, on the norm used to measure size, and on whether perturbations are measured in an absolute or a relative sense. The problem is defined by a function, which may be known explicitly or may be only implicitly defined (as when the problem is to solve an equation).

The most well known example of a condition number is the condition number of a nonsingular square matrix , which is

. More correctly, this is the condition number with respect to inversion, because a relative change to

of norm

can change

by a relative amount as much as, but no more than, about

for small

. The same quantity

is also the condition number for a linear system

(exactly if

is the data, but only approximately if both

and

are the data).

It is easy to see that for any norm for which

(most common norms, but not the Frobenius norm, have this property) and that

tends to infinity as

tends to singularity.

A general definition of (relative) condition number, for a function from

to

, is

Taking a small, nonzero , we have

for small , with approximate equality for some

.

An explicit expression for can be given in terms of the Jacobian matrix,

:

We give two examples.

- If

is a scalar function then

, so

. Hence, for example,

.

- If

is a simple (non-repeated) root of the polynomial

then the data is the vector of coefficients

. It can be shown that the condition number of the root

is, for the

-norm,

A general theory of condition numbers was developed by Rice (1966).

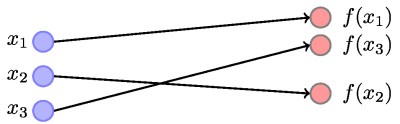

A problem is said to be well conditioned if the condition number is small and ill conditioned if the condition number is large. The meaning of “small” and “large” depends on the problem and the context. This diagram illustrates a well-conditioned function : small changes in

produce small changes in

.

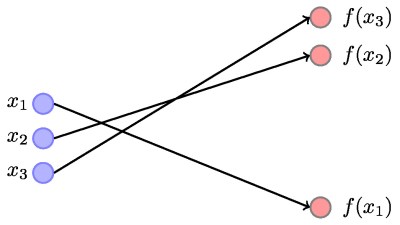

The next diagram depicts an ill-conditioned function : small changes in

can produce large changes in

(but do not necessarily do so, as the closeness of

and

illustrates).

Here are a few key points about condition numbers.

- Even though an explicit expression may be available for it, computing

is usually as expensive as computing

, so a lot of research has focused on obtaining inexpensive estimates of the condition number or bounds for it.

- While

, it is not true for all functions that

is bounded below by

.

- For a range of functions that includes the matrix inverse, matrix eigenvalues, and a root of a polynomial, it is known that the condition number is the reciprocal of the relative distance to the nearest singular problem (one with an infinite condition number).

- As the condition number is itself a function, one can ask: What is the condition number of the condition number? For a variety of problems, including those mentioned in the previous point, the condition number of the condition number is (approximately) the condition number!

References

This is a minimal set of references, which contain further useful references within.

- James W. Demmel, On Condition numbers and the distance to the nearest ill-posed problem, Numer. Math. 51, 251–289, 1987.

- Desmond J.Higham, Condition numbers and their condition numbers, Linear Algebra Appl. 214, 193–213, 1995.

- Nicholas J. Higham, Accuracy and Stability of Numerical Algorithms, second edition, Society for Industrial and Applied Mathematics, Philadelphia, PA, USA, 2002.

- Nicholas J. Higham, Functions of Matrices: Theory and Computation, Society for Industrial and Applied Mathematics, Philadelphia, PA, USA, 2008. (Chapter 3).

- Nicholas J. Higham and Samuel D. Relton, Higher order Fréchet derivatives of matrix functions and the level-

condition number, SIAM J. Matrix Anal. Appl. 35(3), 1019–1037, 2014.

- John R. Rice, A theory of condition, SIAM J. Numer. Anal. 3(2), 287–310, 1966.

Related Blog Posts

This article is part of the “What Is” series, available from https://nhigham.com/category/what-is and in PDF form from the GitHub repository https://github.com/higham/what-is.

Thank you for your “What is” blog.

As far as I understand, the usual way to compute the condition number for the matrix A is to take the ratio of the largest to the smallest singular value of A. How is this related to “the reciprocal of the relative distance to the nearest singular problem”?

One more question: if A is the diagonal matrix with 1 and 1e-10 on its diagonal, A’s condition number is 1e10, but the bound on cond(f,x) way overestimates the error computing the solution of Ax=b. Can you comment on whether there exists a tighter estimate of the sensitivity to perturbations?

What you’re referring to is the matrix condition number with respect to inversion for the 2-norm. The reciprocal of that is the relative distance in the 2-norm to the nearest singular matrix.

On the second point, componentwise perturbaton theory and componentwise condition numbers handle this. See Chapter 7 of my book “Accuracy and Stability of Numerical Algorithms”.

What is the nearest singular matrix of [1 0; 0 1/2]? Is there an explicit formula for its computation?

Dear Sir!

Thank you so much for your blog. Using the condition number cond$(f,x)$ of the function $f$ at $x$ , we can find the bound for relative forward error $\frac{\|f(x+\Delta x)-f(x)\|}{\|f(x)\|}$ if the backward error $\Delta x$ belongs to some neighborhood $N(x)$ of $x$. In genearl we don’t know the size of $N(x)$. Let $\Delta x$ be a backward error of an approximated solution $f(x+\Delta x)$. How can one check that $\Delta x$ belongs to $N(x)$ or not ?

Thank you,

Kannan R.

his isn’t normally a problem. If the backward error is so large that a perturbation expansion is not valid then that usually means we have a very poor approximate solution. In any case, there is often a computable expression for \Delta x and so we can check its size