For a vector $x$ with $n$ elements the sample variance is $s_n^2 = \frac{1}{n-1} \sum_{i=1}^n (x_i - \bar{x})^2$, where the sample mean is $\bar{x} = \frac{1}{n} \sum_{i=1}^n x_i$. An alternative formula often given in textbooks is $s_n^2 = \frac{1}{n-1} \Bigl( \sum_{i=1}^n x_i^2 - \frac{1}{n} \bigl( \sum_{i=1}^n x_i \bigr)^2 \Bigr)$. This second formula has the advantage that it can be computed with just one pass through the data, whereas the first formula requires two passes. However, the one-pass formula can suffer damaging subtractive cancellation, making it numerically unstable. When I wrote my book *Accuracy and Stability of Numerical Algorithms* I found that several pocket calculators appeared to use the one-pass formula.
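To see why the two formulas agree in exact arithmetic, expand the square in the sum of squared deviations. In this short derivation $x_i$ denotes the elements, $n$ their number and $\bar{x}$ the sample mean, so that $\sum_{i=1}^n x_i = n\bar{x}$:

$$
\sum_{i=1}^n (x_i - \bar{x})^2
= \sum_{i=1}^n x_i^2 - 2\bar{x} \sum_{i=1}^n x_i + n\bar{x}^2
= \sum_{i=1}^n x_i^2 - n\bar{x}^2
= \sum_{i=1}^n x_i^2 - \frac{1}{n} \Bigl( \sum_{i=1}^n x_i \Bigr)^2 .
$$

The right-hand side is a difference of two nonnegative quantities that can each be much larger than the result, which is where cancellation enters in floating point arithmetic.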

How do spreadsheet apps available in web browsers and hosted in the cloud fare on computations such as this? I used Google Sheets to compute the standard deviation of vectors of the form $x = [m, m+1, m+2]$ (Google Sheets does not seem to have a built-in function for the sample variance; the standard deviation is the square root of the sample variance). Here is what I found. (The spreadsheet that produced these results is available as this xlsx file. Note that if you click on that link it will probably load into Excel and display the correct result.)

| $m$ | Exact standard deviation | Google’s result |
|---|---|---|
| $10^7$ | 1 | 1 |
| $10^8$ | 1 | 0 |

The incorrect result 0 for $m = 10^8$, that is, for $x = [10^8, 10^8+1, 10^8+2]$, is what I would expect from the one-pass formula in IEEE double precision arithmetic, which has the equivalent of about 16 significant decimal digits of precision, since $\sum_{i=1}^n x_i^2$ and $\frac{1}{n} \bigl( \sum_{i=1}^n x_i \bigr)^2$ are both about $3 \times 10^{16}$ and so there is not enough precision to retain the difference (which is equal to 2). A computation in MATLAB verifies that the one-pass formula returns 0 in IEEE double precision arithmetic.
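The same experiment can be sketched in Python, whose `float` type is IEEE double precision on standard builds. This is an illustrative sketch, not the MATLAB computation mentioned above: the function names are hypothetical, the test vectors $[m, m+1, m+2]$ with $m = 10^7$ and $m = 10^8$ are assumed, and the `max(..., 0.0)` guard against a slightly negative difference is my own addition.

```python
import math


def std_two_pass(x):
    """Sample standard deviation via the two-pass formula."""
    xs = [float(v) for v in x]
    n = len(xs)
    mean = sum(xs) / n                      # first pass: the mean
    ss = sum((v - mean) ** 2 for v in xs)   # second pass: squared deviations
    return math.sqrt(ss / (n - 1))


def std_one_pass(x):
    """Sample standard deviation via the one-pass (textbook) formula."""
    xs = [float(v) for v in x]
    n = len(xs)
    sum_x = 0.0
    sum_sq = 0.0
    for v in xs:                            # single pass over the data
        sum_x += v
        sum_sq += v * v
    diff = sum_sq - sum_x * sum_x / n       # subtraction of two nearly equal numbers
    # Assumption: clamp at zero so rounding cannot produce sqrt of a negative.
    return math.sqrt(max(diff, 0.0) / (n - 1))


def exact_difference(m):
    """sum(x_i^2) - (sum x_i)^2 / n in exact integer arithmetic."""
    x = [m, m + 1, m + 2]
    n = len(x)
    return sum(v * v for v in x) - sum(x) ** 2 // n   # division is exact here


for m in (10**7, 10**8):
    x = [m, m + 1, m + 2]
    print(m, exact_difference(m), std_two_pass(x), std_one_pass(x))
# 10000000 2 1.0 1.0
# 100000000 2 1.0 0.0
```

For $m = 10^8$ the two quantities being subtracted both round to the same double, so the one-pass result collapses to 0, while the two-pass formula works with the deviations $-1, 0, 1$ and is exact.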

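It seems that Google Sheets is using IEEE double precision arithmetic internally, because a test expression evaluates to 2.2E-16, which matches $2^{-52} \approx 2.2 \times 10^{-16}$, the machine epsilon of IEEE double precision. So it appears that Google may be using the one-pass formula.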
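The value 2.2E-16 is the gap between 1 and the next larger double precision number. A small Python sketch of this kind of check follows. The expression `(1 + 2**-52) - 1` is an assumption chosen for illustration and is not necessarily the one typed into Google Sheets.

```python
import sys

eps = 2.0 ** -52                      # spacing of doubles just above 1

probe = (1.0 + eps) - 1.0             # survives: 1 + eps is representable
half_probe = (1.0 + eps / 2) - 1.0    # lost: 1 + eps/2 rounds back to 1

print(f"{probe:.1E}")                 # 2.2E-16
print(half_probe)                     # 0.0
print(probe == sys.float_info.epsilon)  # True
```

An environment working in single precision would return 0 for the first expression, so a nonzero result of this size is consistent with double precision.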

This use of the unstable formula is deeply unsatisfactory, but it is just the tip of the iceberg. In a recent paper Spreadsheets in the Cloud—Not Ready Yet, Bruce McCullough and Talha Yalta show that Google Sheets, Excel Web App and Zoho Sheet all fail on various members of a set of “sanity tests”. This might not be too surprising if you are aware of McCullough’s earlier work in which he found errors in several versions of Microsoft Excel.

However, spreadsheets in the cloud bring further complications, as noted by McCullough and Yalta:

- These spreadsheet apps do not carry version information and the software can be changed by the provider at any time without announcement. It is therefore impossible to reproduce results computed previously.

- The hardware and software environment on which the software is running is not specified, which adds another level of irreproducibility.

- McCullough and Yalta found that the Excel Web App could produce different output from Excel 2010. Anyone moving a spreadsheet between the two applications could be in for a surprise.

The conclusion: use spreadsheets in the cloud at your peril! In fact, I avoid spreadsheets altogether. Anything I want to do can be done better in MATLAB, LaTeX or Emacs Org mode.

The conference venue. Note the residual snow, which fortunately did not fall in any serious amounts during the conference.

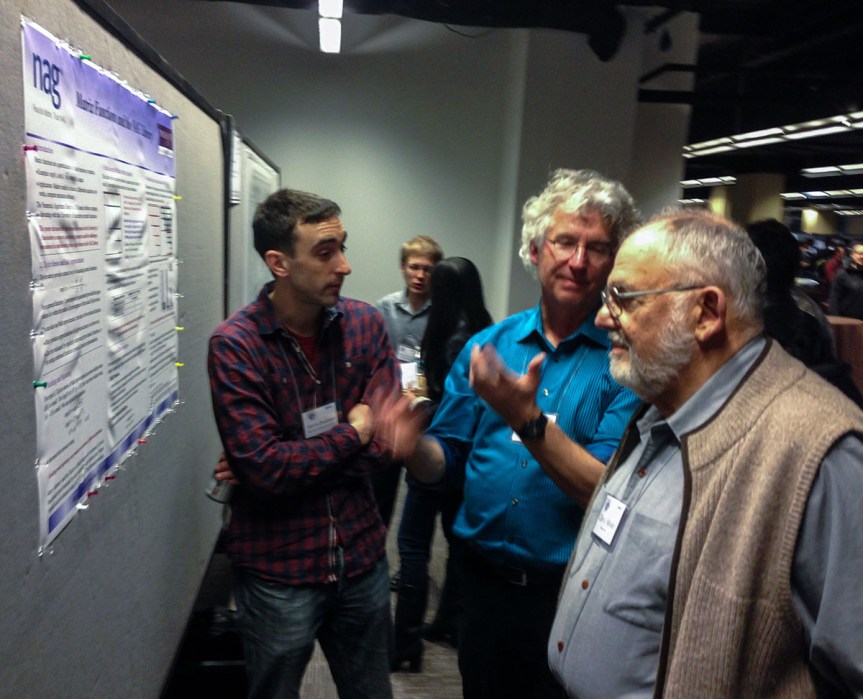

The conference venue. Note the residual snow, which fortunately did not fall in any serious amounts during the conference.  The poster session of about 65 posters was preceded by a poster blitz (1 minute presentations) and was accompanied by an excellent dessert. This photo shows Edvin Deadman (University of Manchester and NAG Ltd.) discussing his poster on Matrix Functions and the NAG Library with Cleve Moler and Charlie Van Loan (authors of the classic

The poster session of about 65 posters was preceded by a poster blitz (1 minute presentations) and was accompanied by an excellent dessert. This photo shows Edvin Deadman (University of Manchester and NAG Ltd.) discussing his poster on Matrix Functions and the NAG Library with Cleve Moler and Charlie Van Loan (authors of the classic  Josh Bloom’s (UC Berkeley) invited presentation

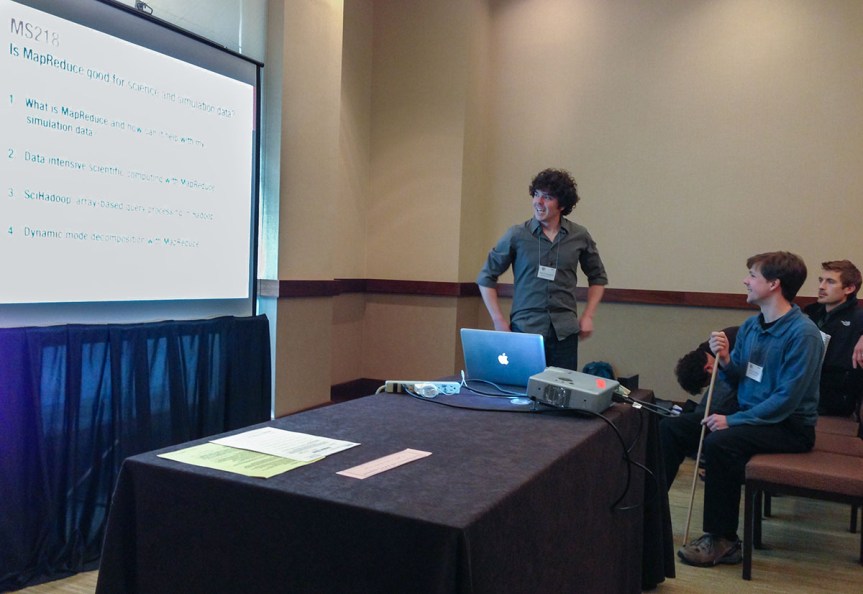

Josh Bloom’s (UC Berkeley) invited presentation  It was interesting to see MapReduce being used to implement numerical algorithms, notably in the minisymposium

It was interesting to see MapReduce being used to implement numerical algorithms, notably in the minisymposium  Here is the lunchtime panel

Here is the lunchtime panel