The Kronecker product of two matrices and

(also called the tensor product) is the

matrix1

In other words, is the block

matrix with

block

. For example,

Notice that the entries of comprise every possible product

, which is not the case for the usual matrix product

when it is defined. Indeed if

and

are

then

is

and contains sums of

of the products

,

is

and contains all

products

.

Two key properties of the Kronecker product are

The second equality implies that when is an eigenvector of

with eigenvalue

and

is an eigenvector of

with eigenvalue

then

so that is an eigenvalue of

with eigenvector

. In fact, the

eigenvalues of

are precisely

for

and

.

Kronecker product structure arises in image deblurring models in which the blur is separable, that is, the blur in the horizontal direction can be separated from the blur in the vertical direction. Kronecker products also arise in the construction of Hadamard matrices. Recall that a Hadamard matrix is a matrix of s whose rows and columns are mutually orthogonal. If

is an

Hadamard matrix then

is a Hadamard matrix.

The practical significance of Kronecker product structure is that it allows computations on a large matrix to be reduced to computations on smaller matrices. For example, suppose and

are Hermitian positive definite matrices and

, which can be shown to be Hermitian positive definite from the properties mentioned above. If

and

are Cholesky factorizations then

so , which is easily seen to be triangular with positive diagonal elements, is the Cholesky factor of

. If

and

are

then forming

and computing its Cholesky factorization costs

flops, whereas

and

can be computed in

flops. If we want to solve a linear system

this can be done using

and

without explicitly form

.

Vec Operator

The vec operator stacks the columns of a matrix into one long vector: if then

. The vec operator and the Kronecker product interact nicely: for any

,

, and

for which the product

is defined,

This relation allows us to express a linear system in the usual form “

”.

Kronecker Sum

The Kronecker sum of and

is defined by

. The eigenvalues of

are

,

,

, where the

are the eigenvalues of

and the

are those of

.

The Kronecker sum arises when we apply the vec operator to the matrix :

Kronecker sum structure also arises in finite difference discretizations of partial differential equations, such as when Poisson’s equation is discretized on a square by the usual five-point operator.

Vec-Permutation Matrix

Since for the vectors

and

contain the same

elements in different orders, we must have

for some permutation matrix

. This matrix is called the the vec-permutation matrix, and is also known as the commutation matrix.

Kronecker multiplication is not commutative, that is, in general, but

and

do contain the same elements in different orders. In fact, the two matrices are related by row and column permutations: if

and

then

This relation can be obtained as follows: for ,

Since these equalities hold for all , we have

, from which the relation follows on using

, which can be obtained by replacing

by

in the definition of vec.

An explicit expression for the the vec-permutation matrix is

where is the

th unit vector.

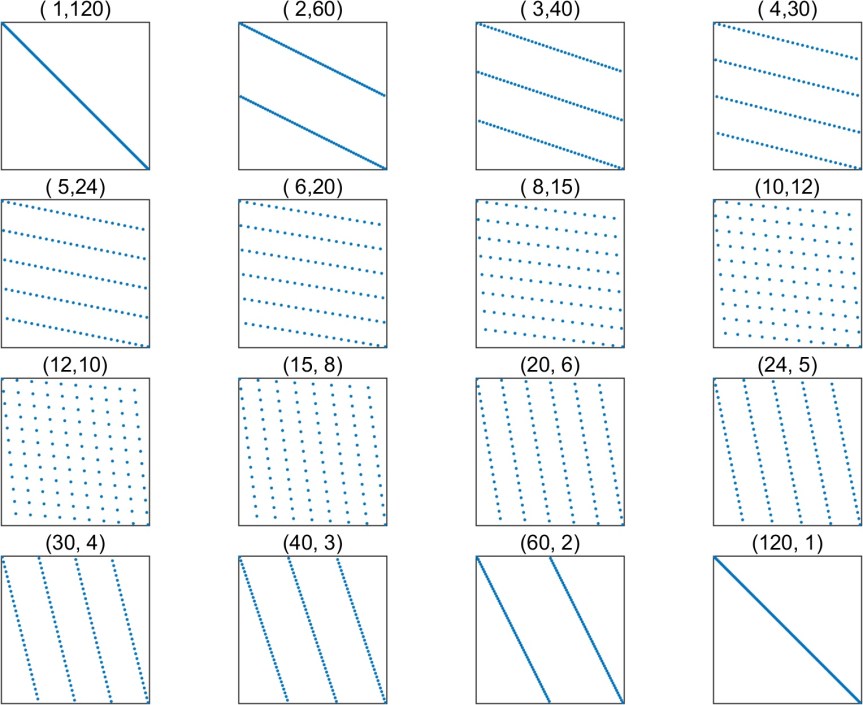

The following plot shows the sparsity patterns of all the vec permutation matrices with

, where the title of each subplot is

.

MATLAB

In MATLAB the Kronecker product can be computed as

kron(A,B) and is obtained by indexing with a colon:

A(:). Be careful using kron as it can generate very large matrices!

Historical Note

The Kronecker product is named after Leopold Kronecker (1823–1891). Henderson et al. (1983) suggest that it should be called the Zehfuss product, after Johann Georg Zehfuss (1832–1891), who obtained the result for

and

in 1858.

References

This is a minimal set of references, which contain further useful references within.

- Per Christian Hansen, James G. Nagy, and Dianne P. O’Leary, Deblurring Images: Matrices, Spectra, and Filtering, Society for Industrial and Applied Mathematics, Philadelphia, PA, USA, 2006.

- Harold V. Henderson, Friedrich Pukelsheim, and Shayle R. Searle, On the History of the Kronecker Product, Linear and Multilinear Algebra 14(2), 113–120, 1983.

- Harold V. Henderson and Shayle R. Searle, The Vec-Permutation Matrix, the Vec Operator and Kronecker Products: A Review, Linear and Multilinear Algebra 9, 271–288, 1981. Note: the definition of

gives the transpose of

as we have defined it.

- Jan R. Magnus and Heinz Neudecker, The Commutation Matrix: Some Properties and Applications, Ann. Statist. 7, 381–394, 1979

- Roger Horn and Charles Johnson, Topics in Matrix Analysis, Cambridge University Press, 1991. Chapter 4.

- Charles F. Van Loan, The Ubiquitous Kronecker Product, J. Comput. Appl. Math. 123(1–2), 85–100, 2000.

Related Blog Posts

This article is part of the “What Is” series, available from https://nhigham.com/category/what-is and in PDF form from the GitHub repository https://github.com/higham/what-is.

Footnotes:

The symbol is typed in

as

\otimes.