The exponential of a square matrix $A$ is defined by the power series (introduced by Laguerre in 1867)

$$\mathrm{e}^A = I + A + \frac{A^2}{2!} + \frac{A^3}{3!} + \cdots.$$

That the series converges for every $A$ follows from the convergence of the series for scalars. Various other formulas are available, such as

$$\mathrm{e}^A = \lim_{s\to\infty} \left(I + \frac{A}{s}\right)^s.$$
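The following pure-Python sketch (no external libraries; all function names are illustrative) contrasts the truncated power series with the limit $(I + A/s)^s$, evaluated at $s = 2^{10}$ by repeated squaring. It assumes the test matrix $A = \begin{bmatrix} 0 & 1\\ -1 & 0 \end{bmatrix}$, for which $A^2 = -I$ and hence $\mathrm{e}^A = \cos(1)\,I + \sin(1)\,A$ is known exactly. It is meant only for small matrices of modest norm, not as a practical algorithm.

```python
import math


def identity(n):
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def expm_series(A, terms=30):
    """Sum the first `terms` terms of I + A + A^2/2! + A^3/3! + ..."""
    result = identity(len(A))
    term = identity(len(A))
    for k in range(1, terms):
        # After this update, term holds A^k / k!.
        term = [[x / k for x in row] for row in matmul(term, A)]
        result = [[r + t for r, t in zip(rrow, trow)]
                  for rrow, trow in zip(result, term)]
    return result


def expm_limit(A, log2_s):
    """Evaluate (I + A/s)^s with s = 2**log2_s by squaring log2_s times."""
    n = len(A)
    s = 2 ** log2_s
    M = [[(1.0 if i == j else 0.0) + A[i][j] / s for j in range(n)]
         for i in range(n)]
    for _ in range(log2_s):
        M = matmul(M, M)
    return M


def max_abs_diff(X, Y):
    return max(abs(x - y) for xr, yr in zip(X, Y) for x, y in zip(xr, yr))


# A^2 = -I, so e^A = cos(1) I + sin(1) A is a rotation matrix.
A = [[0.0, 1.0], [-1.0, 0.0]]
exact = [[math.cos(1.0), math.sin(1.0)], [-math.sin(1.0), math.cos(1.0)]]

err_series = max_abs_diff(expm_series(A), exact)
err_limit = max_abs_diff(expm_limit(A, 10), exact)
print(f"series: {err_series:.1e}, limit with s = 1024: {err_limit:.1e}")
```

In this example the 30-term series agrees with the exact result to about machine precision, whereas the limit with $s = 1024$ is in error by roughly $4\times 10^{-4}$, reflecting its slow $O(1/s)$ convergence.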

The matrix exponential is always nonsingular and $(\mathrm{e}^A)^{-1} = \mathrm{e}^{-A}$.

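Much interest lies in the connection between $\mathrm{e}^{A+B}$ and $\mathrm{e}^A\mathrm{e}^B$. It is easy to show that $\mathrm{e}^{A+B} = \mathrm{e}^A\mathrm{e}^B$ if $A$ and $B$ commute, but commutativity is not necessary for the equality to hold. Series expansions are available that relate $\mathrm{e}^{A+B}$ to $\mathrm{e}^A\mathrm{e}^B$ for general $A$ and $B$, including the Baker–Campbell–Hausdorff formula and the Zassenhaus formula, both of which involve the commutator $[A,B] = AB - BA$.

For Hermitian $A$ and $B$ the inequality

$$\operatorname{trace}\bigl(\mathrm{e}^{A+B}\bigr) \le \operatorname{trace}\bigl(\mathrm{e}^A\mathrm{e}^B\bigr)$$

was proved independently by Golden and Thompson in 1965.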
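These statements can be checked numerically on $2\times 2$ examples. The pure-Python sketch below (illustrative helper names; the exponential is a 40-term truncated Taylor series, adequate only for matrices of modest norm) uses a commuting pair of the form $aI + bN$ with $N = \begin{bmatrix} 0 & 1\\ 0 & 0 \end{bmatrix}$, for which $\mathrm{e}^{A+B} = \mathrm{e}^A\mathrm{e}^B$; the non-commuting pair $N$ and $N^T$, for which $\mathrm{e}^N\mathrm{e}^{N^T} = \begin{bmatrix} 2 & 1\\ 1 & 1 \end{bmatrix}$ differs from $\mathrm{e}^{N+N^T}$; and the symmetric pair $A = \operatorname{diag}(1,-1)$, $B = \begin{bmatrix} 0 & 1\\ 1 & 0 \end{bmatrix}$, for which the Golden–Thompson inequality reads $2\cosh\sqrt{2} \approx 4.356 \le 2\cosh^2 1 \approx 4.762$.

```python
import math


def identity(n):
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def add(X, Y):
    return [[x + y for x, y in zip(xr, yr)] for xr, yr in zip(X, Y)]


def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def expm(A, terms=40):
    """Truncated Taylor series; adequate only for matrices of modest norm."""
    result, term = identity(len(A)), identity(len(A))
    for k in range(1, terms):
        term = [[x / k for x in row] for row in matmul(term, A)]  # A^k / k!
        result = add(result, term)
    return result


def max_abs_diff(X, Y):
    return max(abs(x - y) for xr, yr in zip(X, Y) for x, y in zip(xr, yr))


def trace(X):
    return sum(X[i][i] for i in range(len(X)))


# Commuting pair: both have the form a*I + b*N with N = [[0, 1], [0, 0]].
A1 = [[0.5, 1.0], [0.0, 0.5]]
B1 = [[0.3, -0.2], [0.0, 0.3]]
gap_commuting = max_abs_diff(expm(add(A1, B1)), matmul(expm(A1), expm(B1)))

# Non-commuting pair N and N^T: exp(N) exp(N^T) = [[2, 1], [1, 1]],
# whereas exp(N + N^T) = [[cosh 1, sinh 1], [sinh 1, cosh 1]].
A2 = [[0.0, 1.0], [0.0, 0.0]]
B2 = [[0.0, 0.0], [1.0, 0.0]]
gap_noncommuting = max_abs_diff(expm(add(A2, B2)), matmul(expm(A2), expm(B2)))

# Golden-Thompson inequality for the symmetric (hence Hermitian) pair A3, B3.
A3 = [[1.0, 0.0], [0.0, -1.0]]
B3 = [[0.0, 1.0], [1.0, 0.0]]
gt_lhs = trace(expm(add(A3, B3)))           # exact value 2 cosh(sqrt(2))
gt_rhs = trace(matmul(expm(A3), expm(B3)))  # exact value 2 cosh(1)^2
print(gap_commuting, gap_noncommuting, gt_lhs, gt_rhs)
```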

Especially important is the relation

$$\mathrm{e}^A = \left(\mathrm{e}^{A/2^s}\right)^{2^s}$$

for integer $s$, which is used in the scaling and squaring method for computing the matrix exponential.
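To illustrate why this relation matters, here is a deliberately simplified scaling and squaring sketch in pure Python. It is not the algorithm of Al-Mohy and Higham listed in the references, which uses Padé approximants and a careful choice of parameters; the threshold `theta = 0.5` and the fixed 12-term Taylor approximation are illustrative assumptions. The sketch picks the smallest $s \ge 0$ with $\|A/2^s\|_\infty \le 0.5$, approximates $\mathrm{e}^{A/2^s}$ by the truncated series, and squares the result $s$ times. The test matrix $A = \begin{bmatrix} 0 & 20\\ -20 & 0 \end{bmatrix}$ has $\mathrm{e}^A$ equal to a rotation through 20 radians.

```python
import math


def identity(n):
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def taylor(A, terms):
    """Truncated Taylor series I + A + ... + A^(terms-1)/(terms-1)!."""
    result, term = identity(len(A)), identity(len(A))
    for k in range(1, terms):
        term = [[x / k for x in row] for row in matmul(term, A)]  # A^k / k!
        result = [[r + t for r, t in zip(rr, tr)] for rr, tr in zip(result, term)]
    return result


def norm_inf(A):
    """Infinity norm: the maximum absolute row sum."""
    return max(sum(abs(x) for x in row) for row in A)


def expm_scaling_squaring(A, theta=0.5, terms=12):
    """Simplified scaling and squaring based on e^A = (e^(A/2^s))^(2^s)."""
    nrm = norm_inf(A)
    # Smallest integer s >= 0 with ||A / 2^s|| <= theta.
    s = max(0, math.ceil(math.log2(nrm / theta))) if nrm > 0 else 0
    X = taylor([[x / 2 ** s for x in row] for row in A], terms)
    for _ in range(s):  # square s times to undo the scaling
        X = matmul(X, X)
    return X, s


def max_abs_diff(X, Y):
    return max(abs(x - y) for xr, yr in zip(X, Y) for x, y in zip(xr, yr))


A = [[0.0, 20.0], [-20.0, 0.0]]
exact = [[math.cos(20.0), math.sin(20.0)], [-math.sin(20.0), math.cos(20.0)]]

X, s = expm_scaling_squaring(A)
err_ss = max_abs_diff(X, exact)
err_naive = max_abs_diff(taylor(A, 30), exact)  # no scaling at all
print(f"s = {s}: scaling and squaring error {err_ss:.1e}, "
      f"plain 30-term Taylor error {err_naive:.1e}")
```

Here $s = 6$, so the series is only applied to a matrix of norm $0.3125$. By contrast, the 30-term series applied directly to $A$ is wildly inaccurate, because the terms $A^k/k!$ still have norm about $4\times 10^6$ at $k = 30$.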

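Another important property of the matrix exponential is that it maps skew-symmetric matrices to orthogonal ones. Indeed if $A = -A^T$ then

$$\left(\mathrm{e}^A\right)^{-1} = \mathrm{e}^{-A} = \mathrm{e}^{A^T} = \left(\mathrm{e}^A\right)^T.$$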

This is a special case of the fact that the exponential maps elements of a Lie algebra into the corresponding Lie group.
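A quick numerical check of this property, as a pure-Python sketch with an arbitrarily chosen $3\times 3$ skew-symmetric matrix and a 40-term truncated Taylor series in place of a production-quality algorithm: $Q = \mathrm{e}^A$ satisfies $Q^TQ = I$ to rounding level. Since $\det(\mathrm{e}^A) = \mathrm{e}^{\operatorname{trace}(A)}$ and a skew-symmetric matrix has zero trace, also $\det(Q) = 1$, so $Q$ is a rotation, an element of the Lie group $SO(3)$.

```python
def identity(n):
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def transpose(X):
    return [list(col) for col in zip(*X)]


def expm(A, terms=40):
    """Truncated Taylor series; adequate only for matrices of modest norm."""
    result, term = identity(len(A)), identity(len(A))
    for k in range(1, terms):
        term = [[x / k for x in row] for row in matmul(term, A)]  # A^k / k!
        result = [[r + t for r, t in zip(rr, tr)] for rr, tr in zip(result, term)]
    return result


def det3(M):
    """Determinant of a 3x3 matrix by cofactor expansion along the first row."""
    return (M[0][0] * (M[1][1] * M[2][2] - M[1][2] * M[2][1])
            - M[0][1] * (M[1][0] * M[2][2] - M[1][2] * M[2][0])
            + M[0][2] * (M[1][0] * M[2][1] - M[1][1] * M[2][0]))


A = [[0.0, 1.0, -2.0],
     [-1.0, 0.0, 0.5],
     [2.0, -0.5, 0.0]]
assert all(A[i][j] == -A[j][i] for i in range(3) for j in range(3))  # A^T = -A

Q = expm(A)
QtQ = matmul(transpose(Q), Q)
orth_err = max(abs(QtQ[i][j] - (1.0 if i == j else 0.0))
               for i in range(3) for j in range(3))
print(f"max |Q^T Q - I| = {orth_err:.1e}, det(Q) = {det3(Q):.15f}")
```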

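The matrix exponential plays a fundamental role in linear ordinary differential equations (ODEs). The vector ODE

$$\frac{\mathrm{d}y}{\mathrm{d}t} = Ay, \quad y(0) = c$$

has solution $y(t) = \mathrm{e}^{At}c$, while the solution of the ODE in $n\times n$ matrices

$$\frac{\mathrm{d}Y}{\mathrm{d}t} = AY + YB, \quad Y(0) = C$$

is $Y(t) = \mathrm{e}^{At}C\mathrm{e}^{Bt}$.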
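Both solution formulas can be checked numerically. The pure-Python sketch below (illustrative matrices and step sizes; the exponential is a 40-term truncated Taylor series) compares $\mathrm{e}^{At}c$ at $t = 1$ with the classical fourth-order Runge–Kutta method for $A = \begin{bmatrix} 0 & 1\\ -2 & -3 \end{bmatrix}$ and $c = [1,\ 0]^T$, which encode $u'' + 3u' + 2u = 0$, $u(0) = 1$, $u'(0) = 0$, with exact solution $u(t) = 2\mathrm{e}^{-t} - \mathrm{e}^{-2t}$. It then checks, by a central difference, that $Y(t) = \mathrm{e}^{At}C\mathrm{e}^{Bt}$ satisfies $\mathrm{d}Y/\mathrm{d}t = AY + YB$ for arbitrarily chosen $B$ and $C$.

```python
import math


def identity(n):
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def add(X, Y):
    return [[x + y for x, y in zip(xr, yr)] for xr, yr in zip(X, Y)]


def scale(X, a):
    return [[a * x for x in row] for row in X]


def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def matvec(X, v):
    return [sum(x * vj for x, vj in zip(row, v)) for row in X]


def expm(A, terms=40):
    """Truncated Taylor series; adequate only for matrices of modest norm."""
    result, term = identity(len(A)), identity(len(A))
    for k in range(1, terms):
        term = scale(matmul(term, A), 1.0 / k)  # A^k / k!
        result = add(result, term)
    return result


def rk4(A, c, t, steps):
    """Classical fourth-order Runge-Kutta for y' = A y, y(0) = c, on [0, t]."""
    h = t / steps
    y = list(c)
    for _ in range(steps):
        k1 = matvec(A, y)
        k2 = matvec(A, [yi + h / 2 * ki for yi, ki in zip(y, k1)])
        k3 = matvec(A, [yi + h / 2 * ki for yi, ki in zip(y, k2)])
        k4 = matvec(A, [yi + h * ki for yi, ki in zip(y, k3)])
        y = [yi + h / 6 * (a + 2 * b + 2 * g + d)
             for yi, a, b, g, d in zip(y, k1, k2, k3, k4)]
    return y


A = [[0.0, 1.0], [-2.0, -3.0]]
c = [1.0, 0.0]
t = 1.0

# Vector ODE: compare y(t) = e^{At} c with a numerical integration.
y_exp = matvec(expm(scale(A, t)), c)
y_rk4 = rk4(A, c, t, steps=100)
u_exact = 2 * math.exp(-t) - math.exp(-2 * t)

# Matrix ODE: central-difference check that Y(t) = e^{At} C e^{Bt}
# satisfies dY/dt = AY + YB.
B = [[0.5, 0.0], [1.0, -0.5]]
C = [[1.0, 2.0], [3.0, 4.0]]


def Y(tau):
    return matmul(matmul(expm(scale(A, tau)), C), expm(scale(B, tau)))


dt = 1e-5
dY = scale(add(Y(t + dt), scale(Y(t - dt), -1.0)), 1.0 / (2 * dt))
rhs = add(matmul(A, Y(t)), matmul(Y(t), B))
residual = max(abs(p - q) for pr, qr in zip(dY, rhs) for p, q in zip(pr, qr))
print(y_exp, y_rk4, u_exact, residual)
```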

In control theory, the matrix exponential is used in converting continuous-time dynamical systems to discrete-time ones. Another application of the matrix exponential is in centrality measures for nodes in networks.
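As a small illustration of the network application: one such measure, subgraph centrality, scores node $i$ by the diagonal entry $(\mathrm{e}^A)_{ii}$ of the exponential of the adjacency matrix $A$. Since $(A^k)_{ii}$ counts closed walks of length $k$ that start and end at node $i$, this entry is a count of such walks with length-$k$ walks weighted by $1/k!$. The pure-Python sketch below (truncated Taylor series, illustrative names) computes it for a path on three nodes, where the exact values are $\cosh\sqrt{2} \approx 2.178$ for the middle node and $(1 + \cosh\sqrt{2})/2 \approx 1.589$ for each end node.

```python
import math


def identity(n):
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def expm(A, terms=40):
    """Truncated Taylor series; adequate only for matrices of modest norm."""
    result, term = identity(len(A)), identity(len(A))
    for k in range(1, terms):
        term = [[x / k for x in row] for row in matmul(term, A)]  # A^k / k!
        result = [[r + t for r, t in zip(rr, tr)] for rr, tr in zip(result, term)]
    return result


# Adjacency matrix of the undirected path graph 0 - 1 - 2.
A = [[0.0, 1.0, 0.0],
     [1.0, 0.0, 1.0],
     [0.0, 1.0, 0.0]]

E = expm(A)
centrality = [E[i][i] for i in range(len(A))]  # subgraph centrality per node
print([round(x, 3) for x in centrality])
print(math.cosh(math.sqrt(2)), (1 + math.cosh(math.sqrt(2))) / 2)  # exact values
```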

Many methods have been proposed for computing the matrix exponential. See the references for details.

References

This is a minimal set of references, which contain further useful references within.

- Awad H. Al-Mohy and Nicholas J. Higham, A New Scaling and Squaring Algorithm for the Matrix Exponential, SIAM J. Matrix Anal. Appl. 31(3), 970–989, 2009.

- Ernesto Estrada and Philip A. Knight, A First Course in Network Theory, Oxford University Press, 2015.

- Nicholas J. Higham, Functions of Matrices: Theory and Computation, Society for Industrial and Applied Mathematics, Philadelphia, PA, USA, 2008. (Chapter 10).

- Cleve B. Moler and Charles F. Van Loan, Nineteen Dubious Ways to Compute the Exponential of a Matrix, Twenty-Five Years Later, SIAM Rev. 45(1), 3–49, 2003.

- Gilbert Strang, The Matrix Exponential (video), 2016.

Related Blog Posts

- A Balancing Act for the Matrix Exponential by Cleve Moler (2012)

- The Improved MATLAB Functions Expm and Logm (2016)

- Update of Catalogue of Software for Matrix Functions (2020)

This article is part of the “What Is” series, available from https://nhigham.com/category/what-is and in PDF form from the GitHub repository https://github.com/higham/what-is.

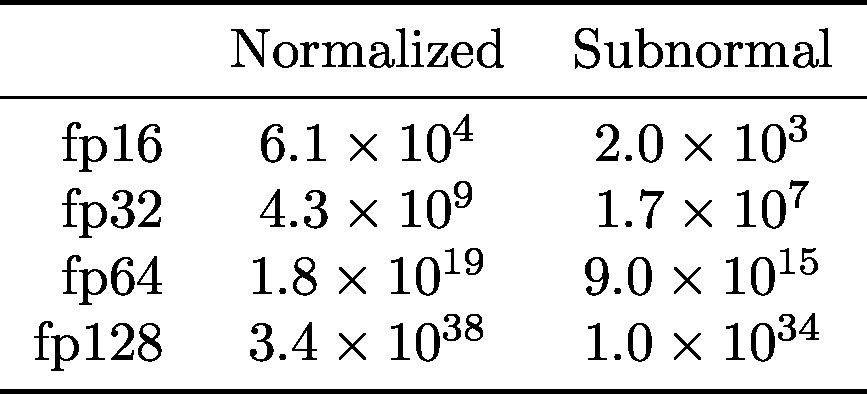

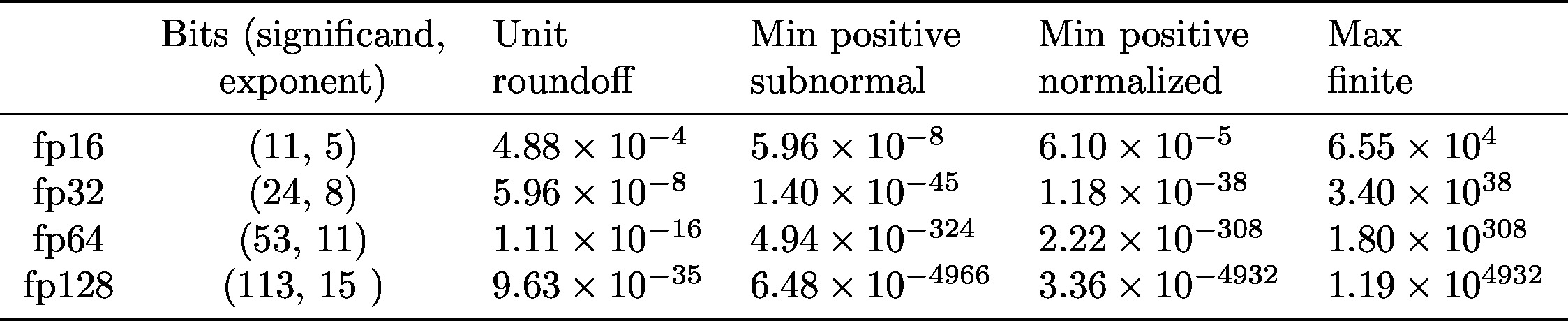

Fp32 (single precision) and fp64 (double precision) were in the 1985 standard; fp16 (half precision) and fp128 (quadruple precision) were introduced in 2008. Fp16 is defined only as a storage format, though it is widely used for computation.
