In many situations we need to evaluate the derivative of a function but we do not have an explicit formula for the derivative. The complex step approximation approximates the derivative (and the function value itself) from a single function evaluation. The catch is that it involves complex arithmetic.

For an analytic function we have the Taylor expansion

where is the imaginary unit. Assume that

maps the real line to the real line and that

and

are real. Then equating real and imaginary parts in

gives

and

. This means that for small

, the approximations

both have error . So a single evaluation of

at a complex argument gives, for small

, a good approximation to

, as well as a good approximation to

if we need it.

The usual way to approximate derivatives is with finite differences, for example by the forward difference approximation

This approximation has error so it is less accurate than the complex step approximation for a given

, but more importantly it is prone to numerical cancellation. For small

,

and

agree to many significant digits and so in floating-point arithmetic the difference approximation suffers a loss of significant digits. Consequently, as

decreases the error in the computed approximation eventually starts to increase. As numerical analysis textbooks explain, the optimal choice of

that balances truncation error and rounding errors is approximately

where is the unit roundoff. The optimal error is therefore of order

.

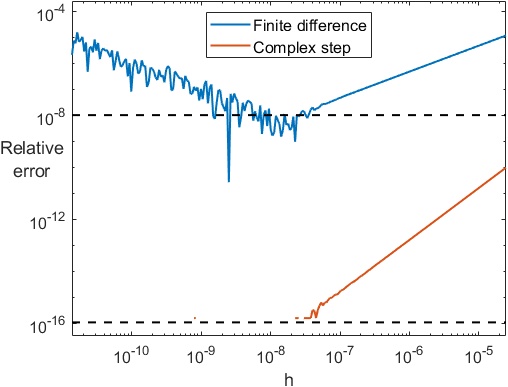

A simple example illustrate these ideas. For the function with

, we plot in the figure below the relative error for the finite difference, in blue, and the relative error for the complex step approximation, in orange, for

ranging from about

to

. The dotted lines show

and

. The computations are in double precision (

). The finite difference error decreases with

until it reaches about

; thereafter the error grows, giving the characteristic V-shaped error curve. The complex step error decreases steadily until it is of order

for

, and for each

it is about the square of the finite difference error, as expected from the theory.

Remarkably, one can take extremely small in the complex step approximation (e.g.,

) without any ill effects from roundoff.

The complex step approximation carries out a form of approximate automatic differentiation, with the variable functioning like a symbolic variable that propagates through the computations in the imaginary parts.

The complex step approximation applies to gradient vectors and it can be extended to matrix functions. If is analytic and maps real

matrices to real

matrices and

and

are real then (Al-Mohy and Higham, 2010)

where is the Fréchet derivative of

at

in the direction

. It is important to note that the method used to evaluate

must not itself use complex arithmetic (as methods based on the Schur decomposition do); if it does, then the interaction of those complex terms with the much smaller

term can lead to damaging subtractive cancellation.

The complex step approximation has also been extended to higher derivatives by using “different imaginary units” in different components (Lantoine et al., 2012).

Here are some applications where the complex step approximation has been used.

- Sensitivity analysis in engineering applications (Giles et al., 2003).

- Approximating gradients in deep learning (Goodfellow et al., 2016).

- Approximating the exponential of an operator in option pricing (Ackerer and Filipović, 2019).

Software has been developed for automatically carrying out the complex step method—for example, by Shampine (2007).

The complex step approximation has been rediscovered many times. The earliest published appearance that we are aware of is in a paper by Squire and Trapp (1998), who acknowledge earlier work of Lyness and Moler on the use of complex variables to approximate derivatives.

References

This is a minimal set of references, which contain further useful references within.

- Awad H. Al-Mohy and Nicholas J. Higham, The Complex Step Approximation to the Fréchet Derivative of a Matrix Function, Numer. Algorithms 53, 133–148, 2010.

- Damien Ackerer and Damir Filipović, Option Pricing with Orthogonal Polynomial Expansions, Mathematical Finance 30, 47–84, 2019.

- Michael B. Giles, Mihai C. Duta, Jens-Dominik Möuller, and Niles A. Pierce, Algorithm Developments for Discrete Adjoint Methods, AIAA Journal 4(2), 198–205, 2003.

- Ian Goodfellow, Yoshua Bengio, and Aaron Courville, Deep Learning, MIT Press, 2016. Page 434.

- Gregory Lantoine, Ryan P. Russell P., and Thierry Dargent, Using Multicomplex Variables for Automatic Computation of High-Order Derivatives, ACM Trans. Math. Software 38, 16:1–16:21, 2012.

- L. F. Shampine, Accurate Numerical Derivatives in MATLAB, ACM Trans. Math. Software 33,26:1–26:17, 2007.

- W. Squire and G. E. Trapp (1998), Using Complex Variables to Estimate Derivatives of Real Functions, SIAM Rev., 40(1), 110–112.

Related Blog Posts

- Complex Step Differentiation by Cleve Moler (2013)

- Differentiation With(out) a Difference (2018)

- What Is a Fréchet Derivative? (2020)

This article is part of the “What Is” series, available from https://nhigham.com/category/what-is and in PDF form from the GitHub repository https://github.com/higham/what-is.

This is the coolest new thing I learned in blog post in I-don’t-know-how-long.

Thanks!

Neat! Brings to mind dual numbers but without nilpotency (https://en.wikipedia.org/wiki/Dual_number).

Beautiful trick! Good to know. By the way, in Goodfellow, Bengio, and Courville, 2016, it appears on p. 427, rather than p. 434 (eqs. 11.8 and 11.9).

Yes – thanks.