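The trace of an $n\times n$ matrix $A$ is the sum of its diagonal elements: $\mathrm{trace}(A) = \sum_{i=1}^n a_{ii}$. The trace is linear, that is, $\mathrm{trace}(A+B) = \mathrm{trace}(A) + \mathrm{trace}(B)$, and $\mathrm{trace}(A) = \mathrm{trace}(A^T)$.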

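A key fact is that the trace is also the sum of the eigenvalues. The proof is by considering the characteristic polynomial $p(t) = \det(tI - A) = t^n + a_{n-1}t^{n-1} + \cdots + a_1 t + a_0$. The roots of $p$ are the eigenvalues $\lambda_1, \lambda_2, \dots, \lambda_n$ of $A$, so $p$ can be factorized as

$$p(t) = (t - \lambda_1)(t - \lambda_2) \cdots (t - \lambda_n),$$

and so $a_{n-1} = -(\lambda_1 + \lambda_2 + \cdots + \lambda_n)$. The Laplace expansion of $\det(tI - A)$ shows that the coefficient of $t^{n-1}$ is $-(a_{11} + a_{22} + \cdots + a_{nn})$. Equating these two expressions for $a_{n-1}$ gives

$$\mathrm{trace}(A) = \sum_{i=1}^n \lambda_i. \qquad (1)$$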

A consequence of (1) is that any transformation that preserves the eigenvalues preserves the trace. Therefore the trace is unchanged under similarity transformations: $\mathrm{trace}(X^{-1}AX) = \mathrm{trace}(A)$ for any nonsingular $X$.
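The invariance of the trace under similarity can be checked on a small case. The sketch below uses illustrative $2\times 2$ matrices chosen here (they are not from the article) and exact rational arithmetic, so the comparison is free of rounding error.

```python
from fractions import Fraction

def matmul(P, Q):
    """Product of two matrices stored as lists of rows."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]

def trace(M):
    """Sum of the diagonal elements of a square matrix."""
    return sum(M[i][i] for i in range(len(M)))

# Illustrative data: A has eigenvalues 1 and 3, X is nonsingular (det = -1).
A = [[Fraction(2), Fraction(1)], [Fraction(1), Fraction(2)]]
X = [[Fraction(1), Fraction(2)], [Fraction(3), Fraction(5)]]

# Explicit inverse of a 2 x 2 matrix via the adjugate formula.
det = X[0][0] * X[1][1] - X[0][1] * X[1][0]
X_inv = [[X[1][1] / det, -X[0][1] / det],
         [-X[1][0] / det, X[0][0] / det]]

S = matmul(matmul(X_inv, A), X)   # the similar matrix X^{-1} A X
print(trace(A), trace(S))         # both equal 1 + 3 = 4
```

The matrix `S` looks nothing like `A` entrywise, yet its diagonal sums to the same value, the sum of the eigenvalues.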

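As an example of how the trace can be useful, suppose $A$ is a symmetric and orthogonal $n\times n$ matrix, so that its eigenvalues are $\pm 1$. If there are $p$ eigenvalues $1$ and $q$ eigenvalues $-1$ then $\mathrm{trace}(A) = p - q$ and $n = p + q$. Therefore $p = (n + \mathrm{trace}(A))/2$ and $q = (n - \mathrm{trace}(A))/2$.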
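As a hedged illustration, a Householder matrix $H = I - 2vv^T/(v^Tv)$ is one familiar example of a symmetric orthogonal matrix (this choice, and the vector `v`, are assumptions made here for the example). The sketch recovers the eigenvalue counts from the trace alone, without computing any eigenvalues.

```python
from fractions import Fraction

n = 3
v = [1, 2, 2]                      # illustrative vector, v^T v = 9
vtv = sum(vi * vi for vi in v)

# Householder matrix H = I - 2 v v^T / (v^T v), built exactly.
H = [[Fraction(int(i == j)) - Fraction(2 * v[i] * v[j], vtv)
      for j in range(n)] for i in range(n)]

# Confirm that H is symmetric and orthogonal (H^T H = H H = I).
HH = [[sum(H[i][k] * H[k][j] for k in range(n)) for j in range(n)]
      for i in range(n)]
identity = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
is_symmetric = all(H[i][j] == H[j][i] for i in range(n) for j in range(n))
is_orthogonal = HH == identity

tr = sum(H[i][i] for i in range(n))
p = (n + tr) / 2                   # number of eigenvalues equal to 1
q = (n - tr) / 2                   # number of eigenvalues equal to -1
print(tr, p, q)                    # 1 2 1
```

This agrees with the known spectrum of a Householder matrix: one eigenvalue $-1$ (eigenvector $v$) and $n-1$ eigenvalues $1$.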

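Another important property is that for an $m\times n$ matrix $A$ and an $n\times m$ matrix $B$,

$$\mathrm{trace}(AB) = \mathrm{trace}(BA) \qquad (2)$$

(despite the fact that $AB \ne BA$ in general). The proof is simple:

$$\mathrm{trace}(AB) = \sum_{i=1}^m (AB)_{ii} = \sum_{i=1}^m \sum_{k=1}^n a_{ik} b_{ki} = \sum_{k=1}^n \sum_{i=1}^m b_{ki} a_{ik} = \sum_{k=1}^n (BA)_{kk} = \mathrm{trace}(BA).$$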

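This simple fact can have non-obvious consequences. For example, consider the equation $AX - XA = I$ in $n\times n$ matrices. Taking the trace gives $0 = \mathrm{trace}(AX) - \mathrm{trace}(XA) = \mathrm{trace}(AX - XA) = \mathrm{trace}(I) = n$, which is a contradiction. Therefore the equation has no solution.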
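A small numerical check makes both points concrete. In this sketch (with matrices chosen here purely for illustration) the products of a $2\times 3$ and a $3\times 2$ matrix have different sizes but the same trace, and a commutator of two square matrices has trace zero, which is why it can never equal the identity.

```python
def matmul(P, Q):
    """Product of two matrices stored as lists of rows."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]

def trace(M):
    """Sum of the diagonal elements of a square matrix."""
    return sum(M[i][i] for i in range(len(M)))

A = [[1, 2, 3], [4, 5, 6]]         # 2 x 3
B = [[1, 0], [2, 1], [0, 3]]       # 3 x 2
AB = matmul(A, B)                  # 2 x 2
BA = matmul(B, A)                  # 3 x 3
print(trace(AB), trace(BA))        # 28 28

# The commutator PQ - QP of square matrices always has zero trace.
P = [[1, 2], [3, 4]]
Q = [[0, 1], [1, 0]]
PQ, QP = matmul(P, Q), matmul(Q, P)
commutator = [[PQ[i][j] - QP[i][j] for j in range(2)] for i in range(2)]
print(trace(commutator))           # 0
```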

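The relation (2) gives $\mathrm{trace}(ABC) = \mathrm{trace}((AB)C) = \mathrm{trace}(C(AB)) = \mathrm{trace}(CAB)$ for $n\times n$ matrices $A$, $B$, and $C$, that is,

$$\mathrm{trace}(ABC) = \mathrm{trace}(CAB). \qquad (3)$$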

So we can cyclically permute terms in a matrix product without changing the trace.
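The word "cyclically" matters: a non-cyclic reordering such as $ACB$ can change the trace. The following sketch, using three $2\times 2$ matrices picked here for illustration, shows the cyclic permutations agreeing while $ACB$ differs.

```python
def matmul(P, Q):
    """Product of two matrices stored as lists of rows."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]

def trace(M):
    """Sum of the diagonal elements of a square matrix."""
    return sum(M[i][i] for i in range(len(M)))

A = [[0, 1], [0, 0]]
B = [[0, 0], [1, 0]]
C = [[1, 0], [0, 0]]

t_abc = trace(matmul(matmul(A, B), C))
t_cab = trace(matmul(matmul(C, A), B))   # cyclic permutation of ABC
t_bca = trace(matmul(matmul(B, C), A))   # cyclic permutation of ABC
t_acb = trace(matmul(matmul(A, C), B))   # not a cyclic permutation

print(t_abc, t_cab, t_bca, t_acb)        # 1 1 1 0
```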

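As an example of the use of (2) and (3), if $x$ and $y$ are $n$-vectors then $\mathrm{trace}(xy^T) = \mathrm{trace}(y^Tx) = y^Tx$. If $A$ is an $n\times n$ matrix then $\mathrm{trace}(xy^TA)$ can be evaluated without forming the matrix $xy^TA$ since, by (3), $\mathrm{trace}(xy^TA) = \mathrm{trace}(y^TAx) = y^TAx$.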
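The saving can be seen in a short sketch. With illustrative data chosen here, the quantity is computed two ways: by one matrix-vector product and one inner product ($O(n^2)$ operations), and by explicitly forming the $n\times n$ matrix $xy^TA$ ($O(n^3)$ operations with the naive product used below).

```python
def matmul(P, Q):
    """Product of two matrices stored as lists of rows."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]

def matvec(M, u):
    """Matrix-vector product M u."""
    return [sum(m * ui for m, ui in zip(row, u)) for row in M]

def dot(u, w):
    """Inner product u^T w."""
    return sum(ui * wi for ui, wi in zip(u, w))

def trace(M):
    """Sum of the diagonal elements of a square matrix."""
    return sum(M[i][i] for i in range(len(M)))

x = [1, 2, 3]
y = [4, 5, 6]
A = [[1, 0, 2], [0, 1, 0], [3, 0, 1]]

cheap = dot(y, matvec(A, x))                # y^T (A x), no n x n product
outer = [[xi * yj for yj in y] for xi in x] # the rank-1 matrix x y^T
explicit = trace(matmul(outer, A))          # trace of the formed matrix

print(cheap, explicit)                      # 74 74
print(trace(outer), dot(y, x))              # 32 32
```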

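The trace is useful in calculations with the Frobenius norm of an $m\times n$ matrix:

$$\|A\|_F = \biggl(\sum_{i=1}^m \sum_{j=1}^n |a_{ij}|^2\biggr)^{1/2} = \bigl(\mathrm{trace}(A^*A)\bigr)^{1/2},$$

where $*$ denotes the conjugate transpose. For example, we can generalize the formula $|x + \mathrm{i}y|^2 = x^2 + y^2$ for a complex number to an $n\times n$ matrix $A$ by splitting $A$ into its Hermitian and skew-Hermitian parts:

$$A = \tfrac{1}{2}(A + A^*) + \tfrac{1}{2}(A - A^*) \equiv B + C,$$

where $B = B^*$ and $C = -C^*$. Then

$$\begin{aligned} \|A\|_F^2 = \|B + C\|_F^2 &= \mathrm{trace}\bigl((B+C)^*(B+C)\bigr)\\ &= \mathrm{trace}(B^*B + C^*C) + \mathrm{trace}(B^*C + C^*B)\\ &= \mathrm{trace}(B^*B + C^*C) + \mathrm{trace}(BC - CB)\\ &= \|B\|_F^2 + \|C\|_F^2. \end{aligned}$$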
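The identity $\|A\|_F^2 = \|B\|_F^2 + \|C\|_F^2$, with $B$ and $C$ the Hermitian and skew-Hermitian parts of $A$, can be verified on a small complex matrix. The matrix below is an arbitrary example chosen here, and the helper names are hypothetical.

```python
def conj_transpose(M):
    """Conjugate transpose of a square matrix stored as a list of rows."""
    n = len(M)
    return [[M[j][i].conjugate() for j in range(n)] for i in range(n)]

def fro2(M):
    """Squared Frobenius norm: the sum of squared moduli of the entries."""
    return sum(z.real ** 2 + z.imag ** 2 for row in M for z in row)

A = [[1 + 2j, 3 + 0j],
     [0 + 4j, 5 - 1j]]
n = len(A)
Ah = conj_transpose(A)

# Hermitian part B and skew-Hermitian part C, so that A = B + C.
B = [[(A[i][j] + Ah[i][j]) / 2 for j in range(n)] for i in range(n)]
C = [[(A[i][j] - Ah[i][j]) / 2 for j in range(n)] for i in range(n)]

print(fro2(A), fro2(B) + fro2(C))   # both equal 56.0
```

Here $\|B\|_F^2 = 38.5$ and $\|C\|_F^2 = 17.5$, which play the roles of $x^2$ and $y^2$ in the scalar formula.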

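If a matrix $A$ is not explicitly known but we can compute matrix–vector products with it then the trace can be estimated by

$$\mathrm{trace}(A) \approx x^TAx,$$

where the vector $x$ has elements independently drawn from the standard normal distribution with mean $0$ and variance $1$. The expectation of this estimate is

$$E(x^TAx) = E\bigl(\mathrm{trace}(x^TAx)\bigr) = E\bigl(\mathrm{trace}(Axx^T)\bigr) = \mathrm{trace}\bigl(E(Axx^T)\bigr) = \mathrm{trace}\bigl(A\,E(xx^T)\bigr) = \mathrm{trace}(A),$$

since $E(x_ix_j) = 0$ for $i \ne j$ and $E(x_i^2) = 1$ for all $i$, so $E(xx^T) = I$. This stochastic estimate, which is due to Hutchinson, is therefore unbiased.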
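A minimal sketch of the estimator follows. Averaging over many independent sample vectors is an assumption added here (a common way to reduce the variance of a single-sample estimate), and the function name `hutchinson_trace`, the test matrix, and the sample count are all hypothetical choices. The matrix is accessed only through a `matvec` callable, to mimic the setting where it is not explicitly known.

```python
import random

def hutchinson_trace(matvec, n, num_samples, rng):
    """Average of x^T (A x) over Gaussian vectors x, using only products A x."""
    total = 0.0
    for _ in range(num_samples):
        x = [rng.gauss(0.0, 1.0) for _ in range(n)]   # entries ~ N(0, 1)
        Ax = matvec(x)
        total += sum(xi * yi for xi, yi in zip(x, Ax))
    return total / num_samples

# Illustrative matrix with trace 2 + 3 + 4 = 9, hidden behind matvec.
A = [[2.0, 1.0, 0.0],
     [1.0, 3.0, 1.0],
     [0.0, 1.0, 4.0]]

def matvec(x):
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

rng = random.Random(0)             # fixed seed for reproducibility
estimate = hutchinson_trace(matvec, 3, 20000, rng)
print(estimate)                    # close to the exact trace, 9
```

A single sample can be far from the true trace, so in practice the number of samples is chosen to balance accuracy against the cost of the matrix-vector products.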

References

- Haim Avron and Sivan Toledo, Randomized Algorithms for Estimating the Trace of an Implicit Symmetric Positive Semi-definite Matrix, J. ACM 58, 8:1-8:34, 2011.

Related Blog Posts

- What Is a Matrix Norm? (2021)

- What Is an Eigenvalue? (2022)

This article is part of the “What Is” series, available from https://nhigham.com/category/what-is and in PDF form from the GitHub repository https://github.com/higham/what-is.

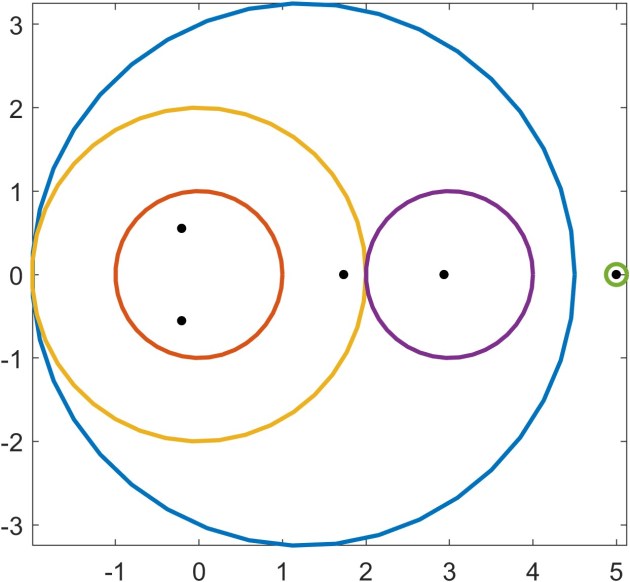

The eigenvalues—three real and one complex conjugate pair—are the black dots. It happens that each disc contains an eigenvalue, but this is not always the case. For the matrix

The eigenvalues—three real and one complex conjugate pair—are the black dots. It happens that each disc contains an eigenvalue, but this is not always the case. For the matrix and the blue disc does not contain an eigenvalue. The next result, which is proved by a continuity argument, provides additional information that increases the utility of Gershgorin’s theorem. In particular it says that if a disc is disjoint from the other discs then it contains an eigenvalue.

and the blue disc does not contain an eigenvalue. The next result, which is proved by a continuity argument, provides additional information that increases the utility of Gershgorin’s theorem. In particular it says that if a disc is disjoint from the other discs then it contains an eigenvalue.