The IEEE Standard 754, published in 1985 and revised in 2008 and 2019, is a standard for binary and decimal floating-point arithmetic. The standard for decimal arithmetic (IEEE Standard 854) was a separate document when it was first published in 1987, but decimal arithmetic has been part of IEEE 754 since the 2008 revision. We focus here on the binary part of the standard.

The standard specifies floating-point number formats, the results of the basic floating-point operations and comparisons, rounding modes, floating-point exceptions and their handling, and conversion between different arithmetic formats.

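A binary floating-point number is represented as

$$y = \pm m \times 2^{e-t},$$

where $t$ is the precision and $e \in [e_{\min}, e_{\max}]$ is the exponent. The significand $m$ is an integer satisfying $0 \le m \le 2^t - 1$. Numbers with $m \ge 2^{t-1}$ are called normalized. Subnormal numbers, for which $0 < m < 2^{t-1}$ and $e = e_{\min}$, are supported.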
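To make the representation concrete, here is a minimal Python sketch that splits an fp16 bit pattern into a sign $s$, integer significand $m$ and exponent $e$ such that the value is $(-1)^s \times m \times 2^{e-t}$ with $t = 11$. It assumes the usual fp16 storage layout (1 sign bit, 5 exponent bits, 10 fraction bits, with an implicit leading 1 for normalized numbers); the function names are hypothetical, and the exponent range $e_{\min} = -13$, $e_{\max} = 16$ is simply what this integer-significand convention gives for fp16. Python's `struct` module (format code `'e'`) serves only as an independent reference decoder.

```python
import struct

T = 11  # fp16 precision t: 10 stored fraction bits plus the implicit leading bit

def decode_fp16(bits):
    """Split a finite fp16 bit pattern into (sign, m, e), value = (-1)^sign * m * 2^(e - t).

    Convention assumed: integer significand 0 <= m <= 2^t - 1,
    which gives e_min = -13 and e_max = 16 for fp16.
    """
    sign = bits >> 15
    biased = (bits >> 10) & 0x1F        # 5-bit stored exponent field
    frac = bits & 0x3FF                 # 10 stored fraction bits
    if biased == 0x1F:
        raise ValueError("infinity or NaN has no (m, e) representation")
    if biased == 0:                     # zero or subnormal: no implicit bit, e = e_min
        return sign, frac, -13
    # normalized: implicit leading bit restored, so m >= 2^(t-1)
    return sign, (1 << (T - 1)) | frac, biased - 14

def value(sign, m, e):
    """Evaluate (-1)^sign * m * 2^(e - t); exact in fp64 for every fp16 number."""
    return (-1) ** sign * m * 2.0 ** (e - T)

# Cross-check against struct's fp16 decoder for every finite bit pattern
for bits in range(1 << 16):
    if (bits >> 10) & 0x1F == 0x1F:     # skip infinities and NaNs
        continue
    reference = struct.unpack('<e', struct.pack('<H', bits))[0]
    assert value(*decode_fp16(bits)) == reference
```

For example, `decode_fp16(0x3C00)` (the number 1) gives $m = 2^{10}$, $e = 1$, and the largest finite pattern `0x7BFF` gives $m = 2^{11} - 1$, $e = 16$.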

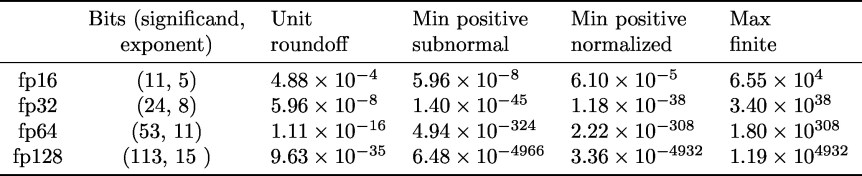

Four formats are defined, whose key parameters are summarized in the following table. The second column shows the number of bits allocated to store the significand and the exponent. I use the prefix “fp” instead of the prefix “binary” used in the standard. The unit roundoff is $u = 2^{-t}$.

Fp32 (single precision) and fp64 (double precision) were in the 1985 standard; fp16 (half precision) and fp128 (quadruple precision) were introduced in 2008. Fp16 is defined only as a storage format, though it is widely used for computation.
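As a quick check on the unit roundoff $u = 2^{-t}$, the sketch below computes $u$ for the four formats from their precisions (11, 24, 53 and 113 bits, counting the implicit leading bit) and confirms two consequences in fp64 and fp16: $1 + u$ is a tie that rounds back to 1 under round to nearest even, while $1 + 2u$ is the next number after 1. Python floats are assumed to be fp64, and rounding to fp16 is emulated with `struct`'s `'e'` format, which rounds to nearest even; the helper names are hypothetical.

```python
import struct
import sys

# Precision t (significand bits, including the implicit leading bit)
PRECISION = {"fp16": 11, "fp32": 24, "fp64": 53, "fp128": 113}

def unit_roundoff(t):
    """Unit roundoff u = 2^(-t) for a binary format of precision t."""
    return 2.0 ** -t

def to_fp16(x):
    """Round an fp64 value to fp16 (nearest even) and convert back to fp64."""
    return struct.unpack('<e', struct.pack('<e', x))[0]

for name, t in PRECISION.items():
    print(f"{name}: u = 2^-{t} = {unit_roundoff(t):.3g}")

u64 = unit_roundoff(53)
# 1 + u lies exactly halfway between 1 and 1 + 2u; the tie goes to the even neighbour, 1
assert 1.0 + u64 == 1.0
assert 1.0 + 2 * u64 > 1.0
# The gap between 1 and the next fp64 number (machine epsilon) is 2u
assert sys.float_info.epsilon == 2 * u64

u16 = unit_roundoff(11)
assert to_fp16(1.0 + u16) == 1.0                # tie rounds to even
assert to_fp16(1.0 + 2 * u16) == 1.0 + 2 * u16  # representable in fp16
```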


The size of these different number systems varies greatly. The next table shows the number of normalized and subnormal numbers in each system.
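Counts like these follow directly from the storage layout. Assuming a format with a $w$-bit exponent field and precision $t$, the two extreme exponent fields are reserved (all zeros for zero and the subnormals, all ones for infinities and NaNs), so there are $2(2^w-2)2^{t-1}$ normalized numbers and $2(2^{t-1}-1)$ subnormal numbers, counting both signs. The sketch below evaluates these formulas and, for fp16 only, confirms them by enumerating all $2^{16}$ bit patterns; the function names are hypothetical.

```python
import math
import struct

# (exponent field width w, precision t) for each format
FORMATS = {"fp16": (5, 11), "fp32": (8, 24), "fp64": (11, 53), "fp128": (15, 113)}

def count_numbers(w, t):
    """Return (normalized, subnormal) counts, both signs included."""
    normalized = 2 * (2**w - 2) * 2**(t - 1)  # all-zeros and all-ones exponent fields excluded
    subnormal = 2 * (2**(t - 1) - 1)          # exponent field zero, fraction nonzero
    return normalized, subnormal

def brute_force_fp16():
    """Classify every fp16 bit pattern by decoding it with struct."""
    normalized = subnormal = 0
    for bits in range(1 << 16):
        x = struct.unpack('<e', struct.pack('<H', bits))[0]
        if math.isfinite(x) and x != 0:
            if abs(x) >= 2.0 ** -14:          # smallest normalized fp16 number
                normalized += 1
            else:
                subnormal += 1
    return normalized, subnormal

for name, (w, t) in FORMATS.items():
    n, s = count_numbers(w, t)
    print(f"{name}: {n:.3e} normalized, {s:.3e} subnormal")

assert brute_force_fp16() == count_numbers(5, 11)
```

The remaining bit patterns are the two zeros, the two infinities and the NaNs, which together account for all $2^{w+t}$ patterns.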

We see that while one can easily carry out a computation on every fp16 number (to check that the square root function is correctly computed, for example), it is impractical to do so for every double precision number.
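The fp16 case can be sketched in a few lines of Python. Here `candidate_sqrt` is a hypothetical implementation under test (an fp64 square root followed by rounding to fp16; rounding twice is known to be harmless for square root here because $53 \ge 2 \times 11 + 2$), and every nonnegative finite fp16 input is checked exactly, using rational arithmetic, against the definition of round to nearest: the true square root must lie between the midpoints that separate the result from its two fp16 neighbours.

```python
import math
import struct
from fractions import Fraction

def fp16_from_bits(bits):
    return struct.unpack('<e', struct.pack('<H', bits))[0]

def bits_from_fp16(x):
    return struct.unpack('<H', struct.pack('<e', x))[0]

def candidate_sqrt(x):
    """Hypothetical fp16 square root under test: fp64 sqrt, then round to fp16."""
    return struct.unpack('<e', struct.pack('<e', math.sqrt(x)))[0]

def is_correctly_rounded_sqrt(x, r):
    """True if r is sqrt(x) rounded to the nearest fp16 number (exact check)."""
    if x == 0:
        return r == x
    b = bits_from_fp16(r)
    below, above = fp16_from_bits(b - 1), fp16_from_bits(b + 1)
    lo = (Fraction(below) + Fraction(r)) / 2   # midpoint with the neighbour below
    hi = (Fraction(r) + Fraction(above)) / 2   # midpoint with the neighbour above
    # sqrt(x) lies in [lo, hi]  <=>  lo^2 <= x <= hi^2, since all terms are positive
    return lo * lo <= Fraction(x) <= hi * hi

checked = failures = 0
for bits in range(0x7C00):   # +0, subnormals and normalized numbers; inf/NaN excluded
    x = fp16_from_bits(bits)
    if not is_correctly_rounded_sqrt(x, candidate_sqrt(x)):
        failures += 1
    checked += 1
print(f"{checked} fp16 inputs checked, {failures} failures")
```

The same loop over the $2^{64}$ fp64 bit patterns is out of reach, which is the point of the comparison.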

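A key feature of the standard is that it is a closed system, thanks to the inclusion of NaN (Not a Number) and $\infty$ (usually written as inf in programming languages) as floating-point numbers: every arithmetic operation produces a number in the system. A NaN is generated by operations such as

$$0/0, \quad 0 \times \infty, \quad \infty/\infty, \quad (+\infty) + (-\infty), \quad \sqrt{-1}.$$

Arithmetic operations involving a NaN return a NaN as the answer. The number $\infty$ obeys the usual mathematical conventions regarding infinity, such as

$$\infty + \infty = \infty, \quad (-1) \times \infty = -\infty, \quad (\text{finite})/\infty = 0.$$

This means, for example, that $1 + 1/x$ evaluates as $1$ when $x = \infty$.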
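These rules can be observed in Python, whose floats are IEEE fp64 numbers. One caveat: Python raises `ZeroDivisionError` for `0.0/0.0` and `ValueError` for `math.sqrt(-1.0)` instead of returning NaN, so the sketch below shows only the NaN-producing operations that Python evaluates without raising.

```python
import math

inf = math.inf

# Invalid operations that Python evaluates without raising: each gives NaN
nan_cases = {
    "0 * inf": 0.0 * inf,
    "inf / inf": inf / inf,
    "inf + (-inf)": inf + (-inf),
}
for expr, result in nan_cases.items():
    print(f"{expr} = {result}")   # prints nan for each case

# Arithmetic involving a NaN returns a NaN
assert math.isnan(nan_cases["0 * inf"] + 1.0)

# Infinity obeys the usual conventions
assert inf + inf == inf
assert (-1.0) * inf == -inf
assert 5.0 / inf == 0.0

# So 1 + 1/x evaluates as 1 when x = inf
x = inf
assert 1.0 + 1.0 / x == 1.0
```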

The standard specifies that all arithmetic operations (including square root) are to be performed as if they were first calculated to infinite precision and then rounded to the target format. A number is rounded to the next larger or next smaller floating-point number according to one of four rounding modes:

- round to the nearest floating-point number, with rounding to even (rounding to the number with a zero least significant bit) in the case of a tie;

- round towards plus infinity and round towards minus infinity (used in interval arithmetic); and

- round towards zero (truncation, or chopping).
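The phrase “as if calculated to infinite precision and then rounded” can be made concrete with exact rational arithmetic. The sketch below uses Python's `fractions.Fraction` to round an exact value to precision $t$ in each of the four modes. The function `round_to_binary` and the mode names are hypothetical, and for simplicity the exponent range is assumed unbounded, so overflow, underflow and subnormals are ignored.

```python
from fractions import Fraction

def round_to_binary(x, t, mode):
    """Round the exact rational x to a binary number with precision t.

    Sketch only: unbounded exponent range (no overflow, underflow or subnormals).
    mode is "nearest" (ties to even), "up" (towards +inf),
    "down" (towards -inf) or "zero" (truncation).
    """
    x = Fraction(x)
    if x == 0:
        return x
    sign = 1 if x > 0 else -1
    a = abs(x)
    # Find e with 2^(e-1) <= a < 2^e, starting from a bit-length estimate
    e = a.numerator.bit_length() - a.denominator.bit_length()
    while Fraction(2) ** (e - 1) > a:
        e -= 1
    while a >= Fraction(2) ** e:
        e += 1
    scaled = a * Fraction(2) ** (t - e)           # lies in [2^(t-1), 2^t)
    m = scaled.numerator // scaled.denominator    # truncated integer significand
    rem = scaled - m                              # discarded part, in [0, 1)
    if mode == "nearest":
        # ties go to the significand with a zero least significant bit
        if rem > Fraction(1, 2) or (rem == Fraction(1, 2) and m % 2 == 1):
            m += 1
    elif mode == "up":
        if rem > 0 and sign > 0:   # positive: move magnitude up
            m += 1
    elif mode == "down":
        if rem > 0 and sign < 0:   # negative: move magnitude up (value goes down)
            m += 1
    elif mode != "zero":           # "zero" keeps the truncated significand
        raise ValueError(f"unknown rounding mode {mode!r}")
    return sign * m * Fraction(2) ** (e - t)

third = Fraction(1, 3)
for mode in ("nearest", "up", "down", "zero"):
    print(mode, float(round_to_binary(third, 53, mode)))
```

With $t = 53$ the `"nearest"` result for $1/3$ should coincide with Python's own `1/3`, while the `"up"` and `"down"` results bracket $1/3$ and differ by one unit in the last place, $2^{-54}$.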

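For round to nearest it follows that

$$\mathrm{fl}(x \mathbin{\mathrm{op}} y) = (x \mathbin{\mathrm{op}} y)(1+\delta), \quad |\delta| \le u, \quad \mathrm{op} = +, -, \times, /,$$

where $\mathrm{fl}$ denotes the computed result, provided no underflow or overflow occurs. The standard also includes a fused multiply-add operation (FMA), $x \times y + z$. The definition requires it to be computed with just one rounding error, so that $\mathrm{fl}(x \times y + z)$ is the rounded version of $x \times y + z$, and hence satisfies

$$\mathrm{fl}(x \times y + z) = (x \times y + z)(1+\delta), \quad |\delta| \le u.$$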

FMAs are supported in some hardware and are usually executed at the same speed as a single addition or multiplication.
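Both error bounds can be spot-checked in fp64, where $u = 2^{-53}$, by comparing computed results with exact rational values. Here `fma` is a hypothetical emulation that forms $x \times y + z$ exactly with `Fraction` and rounds once, relying on CPython's correctly rounded conversion of a `Fraction` to `float` (Python 3.13 and later also provide `math.fma`). The random test data are arbitrary and stay well inside the normalized range, where the bound $|\delta| \le u$ applies.

```python
import random
from fractions import Fraction

U = Fraction(1, 2**53)   # unit roundoff for fp64

def rel_error(computed, exact):
    """|delta| in computed = exact * (1 + delta), evaluated exactly."""
    return abs((Fraction(computed) - exact) / exact)

def fma(x, y, z):
    """Hypothetical FMA emulation: x*y + z formed exactly, then rounded once to fp64."""
    return float(Fraction(x) * Fraction(y) + Fraction(z))

random.seed(754)          # arbitrary seed
worst = Fraction(0)
for _ in range(10_000):
    x, y, z = (random.uniform(-1e3, 1e3) for _ in range(3))
    if y == 0.0:
        continue
    X, Y, Z = Fraction(x), Fraction(y), Fraction(z)
    pairs = [(x + y, X + Y), (x - y, X - Y), (x * y, X * Y),
             (x / y, X / Y), (fma(x, y, z), X * Y + Z)]
    for computed, exact in pairs:
        if exact != 0:
            worst = max(worst, rel_error(computed, exact))
print(f"largest |delta| seen: {float(worst / U):.3f} u")
assert worst <= U

# One rounding versus two
a, b, c = 1 + 2.0**-30, 1 - 2.0**-30, -1.0
print(a * b + c)       # 0.0: the product 1 - 2^-60 is rounded to 1 first
print(fma(a, b, c))    # the exact value -2^-60 is preserved
```

The last lines show why the single rounding matters: $(1 + 2^{-30})(1 - 2^{-30}) = 1 - 2^{-60}$ rounds to 1 before the addition of $-1$, so the unfused expression loses the answer entirely.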

The standard recommends the provision of correctly rounded exponentiation ($x^y$) and transcendental functions ($\exp$, $\log$, $\sin$, $\cos$, etc.) and defines domains and special values for them, but these functions are not required.

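A new feature of the 2019 standard is augmented arithmetic operations, which compute $\mathrm{fl}(x \mathbin{\mathrm{op}} y)$ along with the error $x \mathbin{\mathrm{op}} y - \mathrm{fl}(x \mathbin{\mathrm{op}} y)$, for $\mathrm{op} = +, -, \times$. These operations are useful for implementing compensated summation and other high-accuracy algorithms.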
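Where augmented operations are not available, the same pair for addition can be obtained with Knuth's classic TwoSum algorithm, which uses six ordinary round-to-nearest operations. The sketch below is that substitute, not the standard's operation (the standard specifies its own tie-breaking rule for augmented operations, so results can differ in tie cases), followed by a simple compensated summation that adds the accumulated errors back at the end. The function names are illustrative.

```python
from fractions import Fraction

def two_sum(a, b):
    """Knuth's TwoSum: s = fl(a + b) and err with s + err == a + b exactly.

    Assumes round to nearest and no overflow.
    """
    s = a + b
    b_virtual = s - a
    a_virtual = s - b_virtual
    err = (a - a_virtual) + (b - b_virtual)
    return s, err

def naive_sum(values):
    """Plain recursive summation (explicit loop: sum() in Python 3.12+ itself compensates)."""
    s = 0.0
    for v in values:
        s += v
    return s

def compensated_sum(values):
    """Accumulate the exact error of every addition and add it back at the end."""
    s = 0.0
    correction = 0.0
    for v in values:
        s, err = two_sum(s, v)
        correction += err
    return s + correction

data = [1e16, 1.0, -1e16]
print(naive_sum(data), compensated_sum(data))
```

In fp64 the 1.0 is lost in the naive sum because $10^{16} + 1$ is a tie that rounds back to $10^{16}$; TwoSum captures that lost 1.0 as the error term, and the compensated sum recovers it.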

William (“Velvel”) Kahan of the University of California at Berkeley received the 1989 ACM Turing Award for his contributions to computer architecture and numerical analysis, and in particular for his work on IEEE floating-point arithmetic standards 754 and 854.

This article is part of the “What Is” series, available from https://nhigham.com/category/what-is and in PDF form from the GitHub repository https://github.com/higham/what-is.