The Princeton Companion to Applied Mathematics has a 23-page Part I article “History of Applied Mathematics”, but apart from that it does not contain any articles with a historical or biographical emphasis. In designing the book we felt that the articles in Part II, “Equations, Laws and Functions of Applied Mathematics”, would provide a link into the history of applied mathematics through the various equations, laws, and functions included, most of which are eponymous.

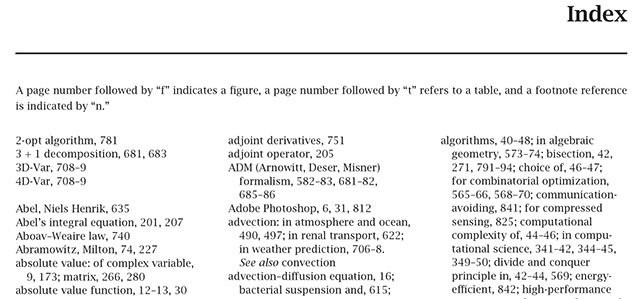

The index was produced by a professional indexer, who made a judgement on which of the many names in the book had significant enough mentions to index. A phrase such as “Newton’s method” would not generate an index entry for “Newton”, but a phrase describing something that Newton did might.

The index revealed some interesting features. First, there are many entries for famous mathematicians and scientists: 76 in total, ranging from Niels Henrik Abel to Thomas Young. This means that even though there are no biographical articles, authors have included plenty of historical and biographical snippets. Second, many of the mathematicians might equally well have been mentioned in a book on pure mathematics (Halmos, Poincaré, Smale, Weil), which indicates the blurred boundary between pure and applied mathematics.

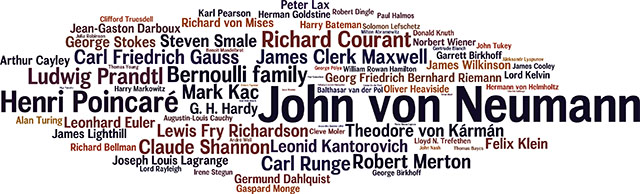

A third feature of the index is that the number of locators for the mathematicians and scientists that it contains varies greatly, from 1 to 20. We can use this to produce a highly non-scientific ranking. Here is a Wordle, in which the font size is proportional to the number of times that each name occurs.

The table of occurrences, which begins

| Name | Locators |
|---|---|
| von Neumann, John | 20 |
| Poincaré, Henri | 12 |
| Bernoulli family | 9 |
| Courant, Richard | 9 |
| Prandtl, Ludwig | 9 |
| Gauss, Carl Friedrich | 8 |
| Kac, Mark | 8 |
| Maxwell, James Clerk | 8 |
| Merton, Robert | 8 |
| Runge, Carl | 8 |
| Shannon, Claude | 8 |

can be found in this PDF file.
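For readers who want to reproduce the ranking, here is a minimal Python sketch of the proportional scaling that a word cloud of this kind uses. The counts are copied from the table above; the function name `font_sizes` and the 60 pt maximum are my own assumptions for illustration, not anything taken from Wordle itself.

```python
# Locator counts copied from the table above (top of the ranking only).
counts = {
    "von Neumann, John": 20,
    "Poincaré, Henri": 12,
    "Bernoulli family": 9,
    "Courant, Richard": 9,
    "Prandtl, Ludwig": 9,
    "Gauss, Carl Friedrich": 8,
    "Kac, Mark": 8,
    "Maxwell, James Clerk": 8,
    "Merton, Robert": 8,
    "Runge, Carl": 8,
    "Shannon, Claude": 8,
}

MAX_FONT_PT = 60  # hypothetical size for the most-mentioned name


def font_sizes(counts, max_pt=MAX_FONT_PT):
    """Return a font size per name, proportional to its locator count."""
    top = max(counts.values())
    return {name: max_pt * n / top for name, n in counts.items()}


sizes = font_sizes(counts)

# Rank by count (descending), breaking ties alphabetically as in the table.
ranking = sorted(counts, key=lambda name: (-counts[name], name))

for name in ranking:
    print(f"{name:24s} {counts[name]:3d}  {sizes[name]:5.1f} pt")
```

Scaling linearly from zero keeps the font size strictly proportional to the count, which is what the description of the Wordle says; a real word-cloud tool may well clamp or rescale sizes differently.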

John von Neumann (1903–1957) emerges as The Companion’s “most mentioned” applied mathematician. Indeed, von Neumann was a hugely influential mathematician who contributed to many fields, as his index entry shows:

von Neumann, John: applied mathematics and, 56–59, 73; computational science and, 336–37, 350; economics and, 71, 644, 650, 869; error analysis and, 77; foams and, 740; Monte Carlo method and, 57; random number generation and, 762; shock waves and, 720; spectral theory and, 239–40, 426

von Neumann’s work has strong connections with my own research interests. With Herman Goldstine he published an important rounding error analysis of Gaussian elimination for inverting a symmetric positive definite matrix. He also introduced the alternating projections method that I have used to solve the nearest correlation matrix problem. And he derived important results on unitarily invariant matrix norms and singular value inequalities.

More about von Neumann can be found in the biographies

- Aspray, W. (1990). John von Neumann and the Origins of Modern Computing. MIT Press.

- Poundstone, W. (1992). Prisoner’s Dilemma. Oxford University Press.

