BibTeX is an important part of my workflow in writing papers and books. Here are three tips for getting the most out of it.

1. DOI and URL Links from Bibliography Entries

A digital object identifier (DOI) provides a persistent link to an object on the web, via the server at http://dx.doi.org. Most scholarly journals now assign DOIs to papers, and many papers from the past have been retrofitted with them. Books can also have DOIs, as in SpringerLink or the SIAM ebook program.

It is very convenient for a reader of a PDF document to be able to access items from the bibliography by clicking on part of the item. The links can be constructed from a DOI, and if one does not exist a URL can be used instead. How can we produce such links automatically with BibTeX? Simply add fields doi or url and use an appropriate BibTeX style (BST) file. I’m not aware of any standard BST file that handles these fields, so I modified my own BST file using these tips. The result, myplain2-doi.bst, is available in this GitHub repository. Example bib entries that work with it are as follows.

@article{ahr13,
  author = "Awad H. Al-Mohy and Nicholas J. Higham and Samuel D. Relton",
  title = "Computing the {Fr{\'e}chet} Derivative of the Matrix Logarithm and Estimating the Condition Number",
  journal = "SIAM J. Sci. Comput.",
  volume = 35,
  number = 4,
  pages = "C394-C410",
  year = 2013,
  doi = "10.1137/120885991",
  created = "2012.07.05",
  updated = "2013.08.06"
}

@article{hpp09,
  author = "Horn, Roger A. and Piazza, Giuseppe and Politi, Tiziano",
  title = "Explicit Polar Decompositions of Complex Matrices",
  journal = "Electron. J. Linear Algebra",
  volume = "18",
  pages = "693-699",
  year = "2009",
  url = "https://eudml.org/doc/233457",
  created = "2013.01.02",
  updated = "2015.07.15"
}

The journal in which the second example appears is one that does not itself provide DOIs or URLs for papers. However, the European Digital Mathematics Library provides URLs for this journal, so I have used the appropriate one from there.

My BST file hyperlinks the title of each item to the corresponding DOI or URL. For an example of the style in use, see the typeset version of njhigham.bib, for which here is a direct link. An alternative style is simply to print the DOI or URL with a hyperlink.
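The rule behind these links (use the DOI if there is one, otherwise fall back to the URL) can be sketched in a few lines of Python. This is only an illustration of the logic, not the code in the BST file: the function names `link_target` and `linked_title` are hypothetical, the entry is assumed to be available as a plain dictionary of fields, and the `\href` output assumes the hyperref package is loaded in the LaTeX document.

```python
from typing import Mapping, Optional

# Current address of the DOI resolver; dx.doi.org is the older form of the same service.
DOI_RESOLVER = "https://doi.org/"


def link_target(fields: Mapping[str, str]) -> Optional[str]:
    """Return the address a bibliography item should link to, or None."""
    doi = fields.get("doi", "").strip()
    if doi:
        # A DOI takes priority because it is a persistent identifier.
        return DOI_RESOLVER + doi
    url = fields.get("url", "").strip()
    return url or None


def linked_title(fields: Mapping[str, str]) -> str:
    """Wrap the title in \\href when a link target exists (assumes hyperref)."""
    title = fields.get("title", "")
    target = link_target(fields)
    if target is None:
        return title  # no doi or url field: leave the title unlinked
    return r"\href{%s}{%s}" % (target, title)
```

For the two entries above, `link_target` gives `https://doi.org/10.1137/120885991` for `ahr13` and `https://eudml.org/doc/233457` for `hpp09`. The sketch does not percent-encode the DOI, which may be needed for DOIs containing unusual characters.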

2. Web Page from Bib File via BibBase

Once you have made a bib file of a group of publications, a natural question is how to generate automatically a web page that displays the publications in an easily browsable format. A great way to do this is with BibBase. You simply point BibBase at your online bib file and it generates some JavaScript, PHP, or iFrame code. When you include that code in your web page it displays the bib entries sorted by year, with each DOI or URL field made clickable and each BibTeX entry revealable. A menu allows sorting by author, type, or year, and the list can be folded. My bib file njhigham.bib formatted by BibBase is available here.

BibBase is free to use. It was first released a few years ago and is still being developed, with improved support for LaTeX and for special characters added recently. Keep up to date with developments by following the BibBase Twitter feed.

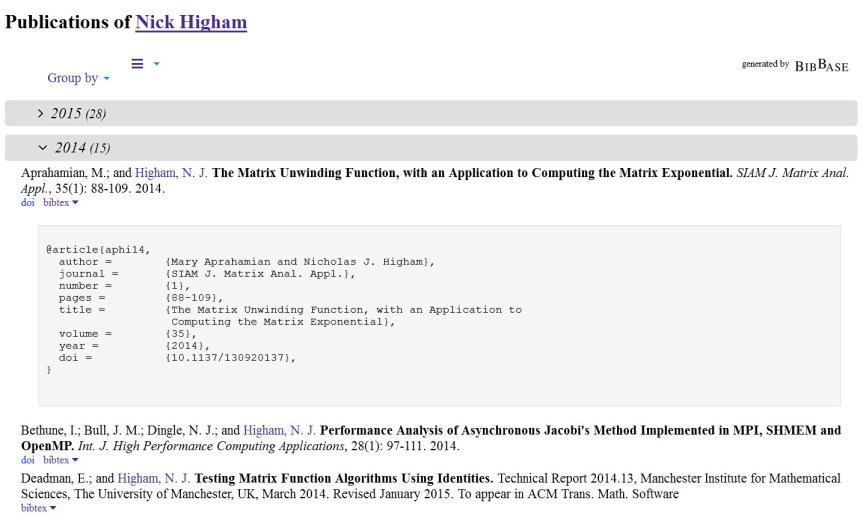

Here are two screenshots. The first shows part of the default layout, with outputs from 2015 folded and one bib entry revealed.

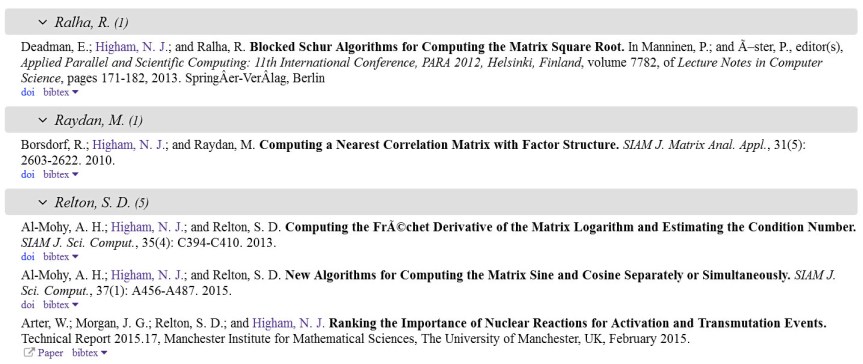

The second screenshot shows part of the list ordered by author.

3. Bib Entry from DOI

If you happen to know the DOI of a paper and want to obtain a bib entry, go to the doi2bib service and type in your DOI. For further information see this blog post and follow the doi2bib Twitter feed.
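If you prefer to script the lookup, one alternative route (not necessarily what doi2bib does internally) is DOI content negotiation: registration agencies such as Crossref and DataCite return a BibTeX rendering when the resolver is asked for the `application/x-bibtex` media type. The following is a minimal sketch using only the Python standard library; the function names are hypothetical, and the citation key and field formatting of the returned entry are chosen by the registration agency, so the result usually needs tidying before it goes into a bib file.

```python
import urllib.request


def bibtex_request(doi: str) -> urllib.request.Request:
    """Build a request asking the DOI resolver for a BibTeX rendering."""
    return urllib.request.Request(
        "https://doi.org/" + doi,
        headers={"Accept": "application/x-bibtex"},
    )


def fetch_bibtex(doi: str, timeout: float = 10.0) -> str:
    """Fetch the BibTeX entry for a DOI as text (requires network access)."""
    with urllib.request.urlopen(bibtex_request(doi), timeout=timeout) as resp:
        return resp.read().decode("utf-8")


# Example call (needs a network connection):
# print(fetch_bibtex("10.1137/120885991"))
```

Separating the request construction from the network call keeps the first function easy to check offline.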